What is a Sitemap Checker?

XML Sitemap Finder is a tool that checks whether a site has an XML sitemap.

You can use Google Sitemap Checker to check whether your website has an XML sitemap and to identify any errors in it. If the tool finds errors, it tells you how to fix them.

The XML Sitemap Checker can assist you in several key areas:

- It verifies that an XML sitemap file is present on your site.

- It detects problems within your XML sitemap file.

- It helps search engines index your site more efficiently.
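
To make the first two checks concrete, here is a minimal offline sketch of what "detecting problems" in a sitemap file can involve. It assumes the file follows the sitemaps.org 0.9 schema; `extract_sitemap_urls` is a hypothetical helper name used for illustration and is not how the tool itself is implemented.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org 0.9 protocol.
SITEMAP_NS = "{http://www.sitemaps.org/schemas/sitemap/0.9}"


def extract_sitemap_urls(xml_text):
    """Return (kind, urls) for a sitemap document, or raise ValueError.

    kind is "urlset" for a regular sitemap or "sitemapindex" for an index file.
    """
    try:
        root = ET.fromstring(xml_text)
    except ET.ParseError as exc:
        raise ValueError(f"not well-formed XML: {exc}") from exc

    if root.tag == SITEMAP_NS + "urlset":
        kind, entry = "urlset", "url"
    elif root.tag == SITEMAP_NS + "sitemapindex":
        kind, entry = "sitemapindex", "sitemap"
    else:
        raise ValueError(f"unexpected root element: {root.tag}")

    urls = []
    for node in root.findall(SITEMAP_NS + entry):
        loc = node.find(SITEMAP_NS + "loc")
        # Every entry needs a non-empty <loc> child to be usable.
        if loc is None or not (loc.text or "").strip():
            raise ValueError("entry without a <loc> value")
        urls.append(loc.text.strip())
    return kind, urls
```

A `ValueError` here corresponds to a structural problem in the file (malformed XML, a wrong root element, or an entry without a location); the returned URL list is what further checks would then inspect.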

Key Features of Sitemap Tester

Unified Dashboard. A unified dashboard provides a single view of your technical SEO data, making it easy to track and manage your site’s performance.

User-friendly Interface. The user-friendly interface makes it easy to use Sitechecker, even if you are not a technical expert.

Complete SEO Toolset. The Sitemap Lookup Tool is part of a complete SEO toolset that can help you improve your website’s ranking in search engine results pages (SERPs).

How to Use the XML Sitemap Finder

Fortunately, there’s no need to manually check your website’s code to find out whether it has a sitemap, the file that lists a website’s essential pages. With the help of Sitemap Detector, you can look up any site to get this information.
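
For comparison, a manual lookup usually starts with the site's robots.txt, which may declare sitemap locations in `Sitemap:` lines. The sketch below shows that step under stated assumptions: `sitemap_candidates` is a hypothetical helper, the robots.txt content is passed in as a string (fetching it is left out), and the `/sitemap.xml` fallback is only a common convention, not a guarantee.

```python
from urllib.parse import urljoin


def sitemap_candidates(site_url, robots_txt):
    """List sitemap URLs declared in robots.txt, else guess the common default."""
    declared = []
    for line in robots_txt.splitlines():
        # Drop trailing comments, then split "Field: value" on the first colon.
        line = line.split("#", 1)[0].strip()
        field, sep, value = line.partition(":")
        if sep and field.strip().lower() == "sitemap" and value.strip():
            declared.append(value.strip())
    if declared:
        return declared
    # Assumption: /sitemap.xml is a widespread convention, not a rule.
    return [urljoin(site_url, "/sitemap.xml")]
```

Each candidate URL would still need to be requested and validated before concluding that the site really has a sitemap.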

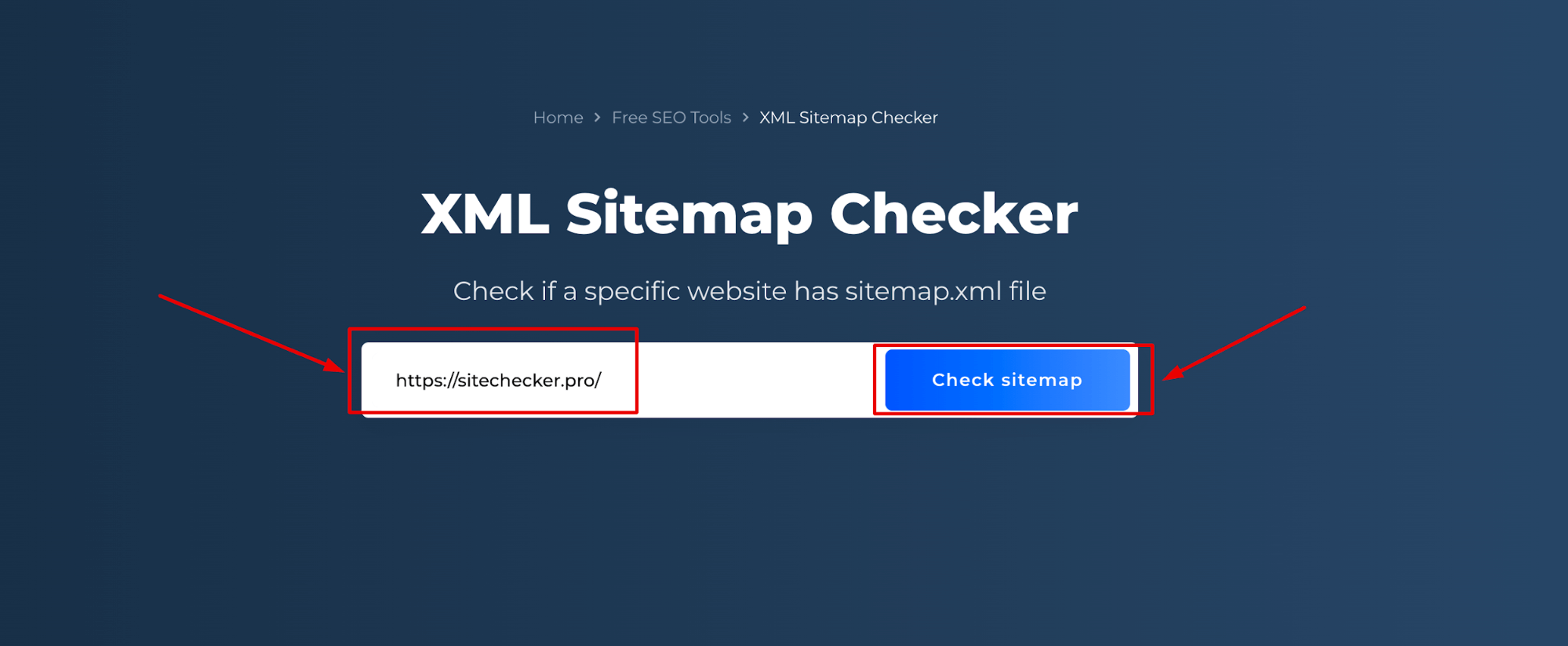

Step 1: Insert your domain name

The first step is entering the URL of your website:

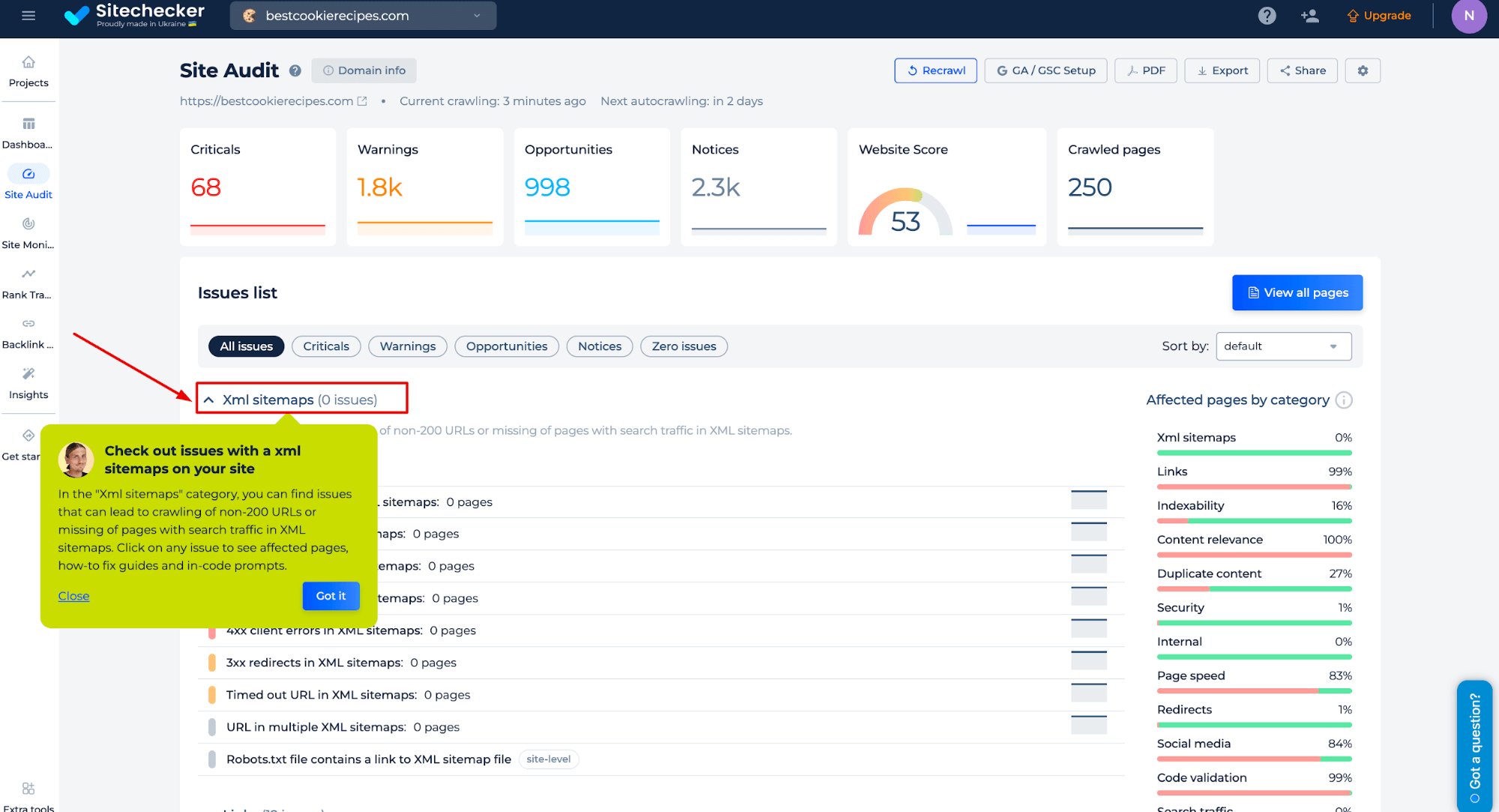

Step 2: Interpret the Sitemap Tester results

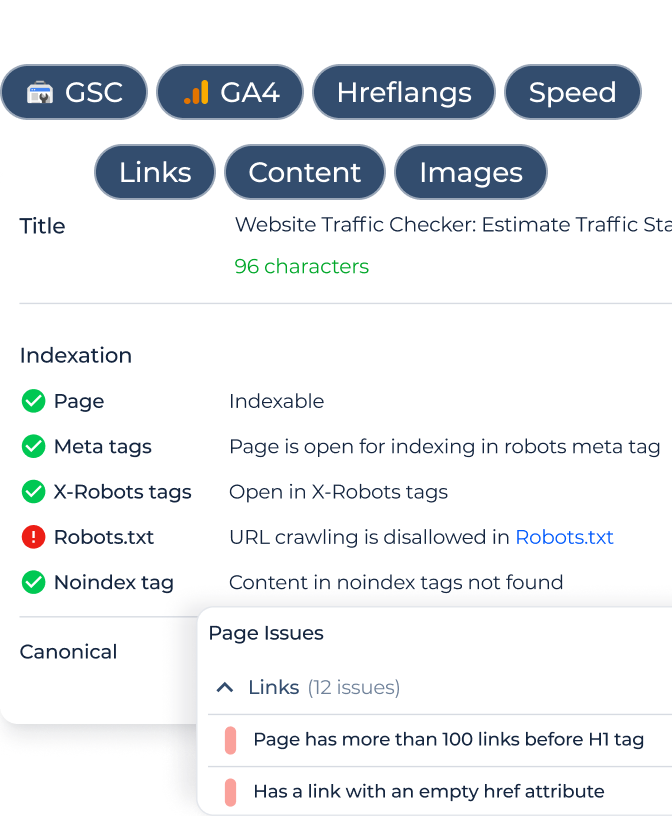

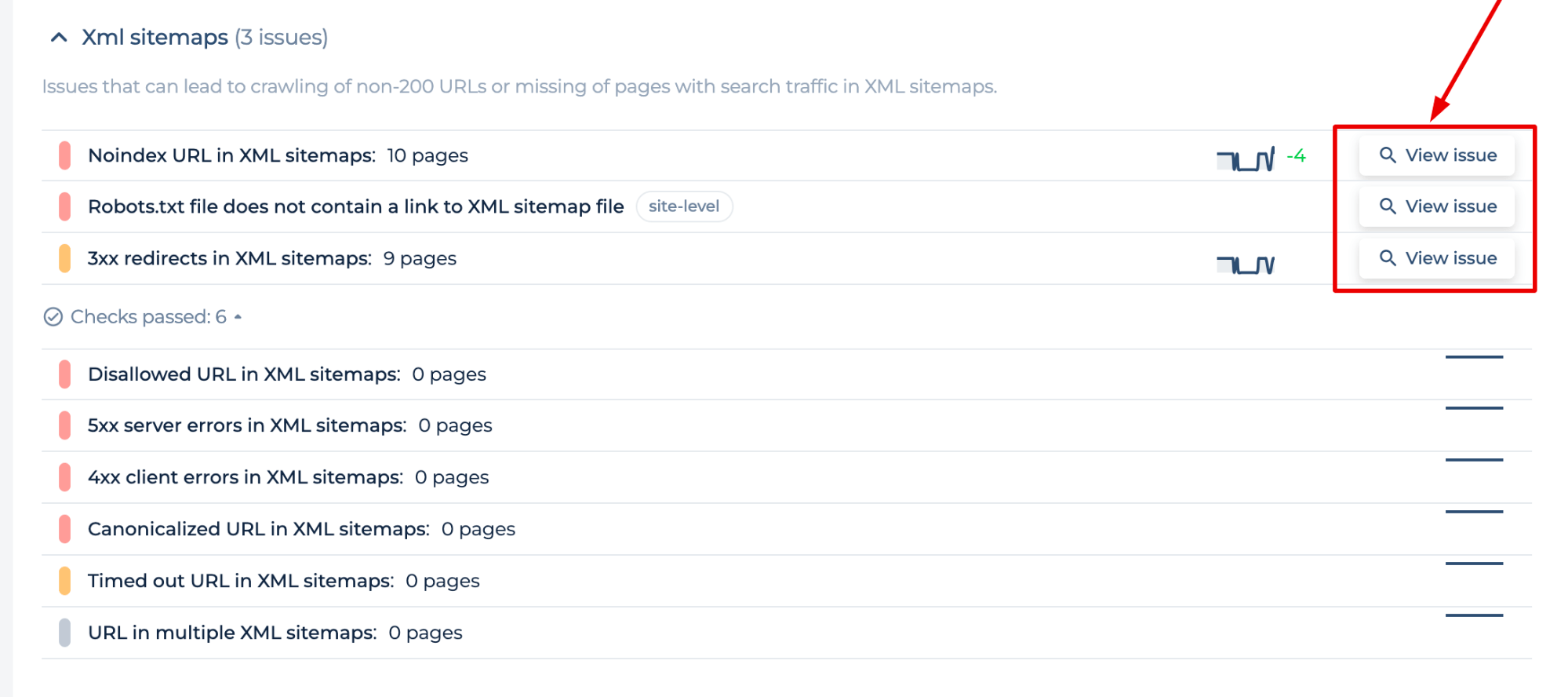

The scan you perform generates a site audit for the domain you enter. In the “XML sitemaps” category, you can find issues that can lead to search engines crawling non-200 URLs, or to pages with search traffic being missing from your XML sitemaps. Click on any issue to see affected pages, how-to-fix guides, and in-code prompts.

The sitemap tester lets you identify sitemap errors and get instructions on how to fix them, reducing their effect on your indexing:

- Non-2xx status code URLs in the sitemap (3xx redirects, 4xx client errors, 5xx server errors)

- Noindex URLs and disallowed URLs

- Robots.txt file with no link to the XML sitemap, URL in multiple XML sitemaps, too-large sitemap.xml file, etc.
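
Several of the issue types above can be expressed as small checks. The following is a rough sketch, not the tool's actual logic: all function names are hypothetical, status codes and robots.txt content are assumed to have been collected already, and the size limits are the ones stated in the sitemaps.org protocol (50,000 URLs and 50 MB uncompressed per file). Noindex detection is left out because it requires fetching each page.

```python
from collections import Counter
from urllib.robotparser import RobotFileParser

# Limits from the sitemaps.org protocol: 50,000 URLs and 50 MB uncompressed.
MAX_URLS = 50_000
MAX_BYTES = 52_428_800


def status_issue(status_code):
    """Map an HTTP status code to an issue label, or None for 2xx."""
    if 200 <= status_code < 300:
        return None
    if 300 <= status_code < 400:
        return "3xx redirect"
    if 400 <= status_code < 500:
        return "4xx client error"
    if 500 <= status_code < 600:
        return "5xx server error"
    return "unexpected status"


def disallowed_urls(urls, robots_txt, user_agent="*"):
    """Return the sitemap URLs that robots.txt blocks for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


def urls_in_multiple_sitemaps(sitemaps):
    """Given {sitemap_name: [urls]}, return URLs listed in more than one sitemap."""
    counts = Counter(url for urls in sitemaps.values() for url in set(urls))
    return sorted(url for url, n in counts.items() if n > 1)


def is_too_large(url_count, size_bytes):
    """True if a single sitemap file exceeds either protocol limit."""
    return url_count > MAX_URLS or size_bytes > MAX_BYTES
```

For example, `status_issue(301)` returns `"3xx redirect"`, which is the kind of URL that should be replaced in the sitemap by its final destination.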

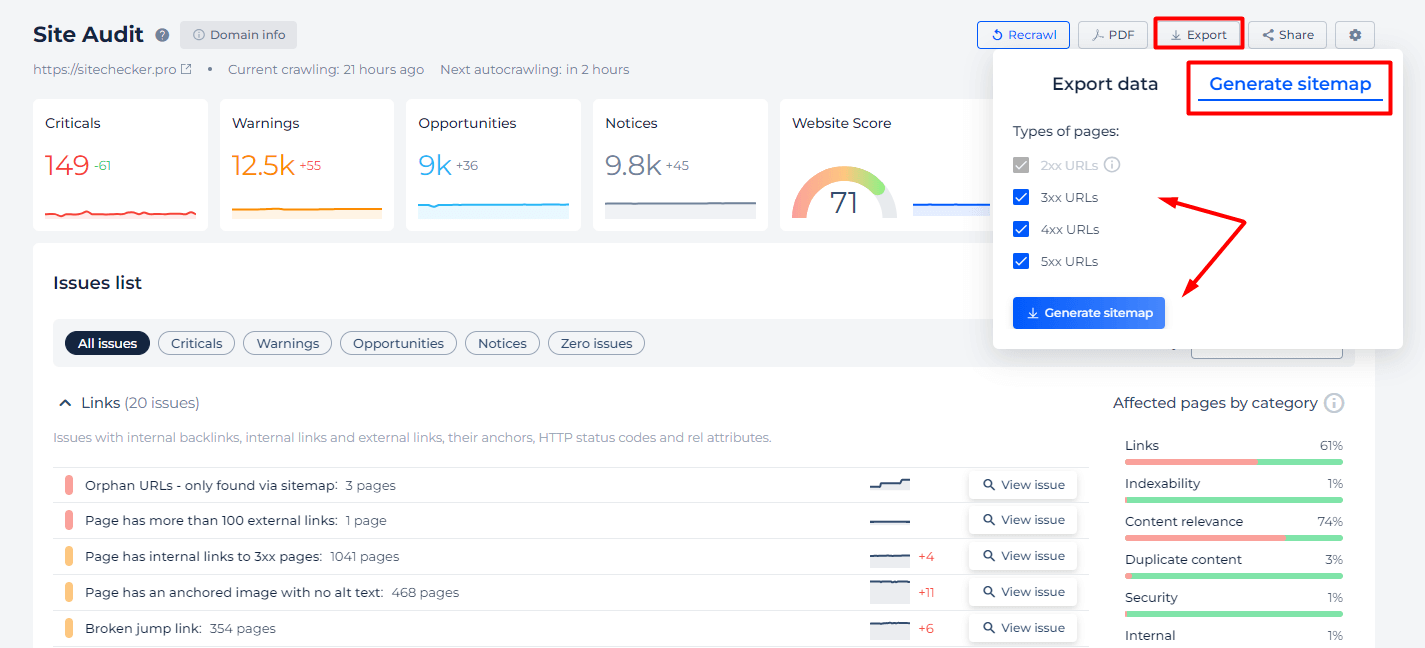

Additionally, we offer specific features in case you’re unsure whether all pages are included in your sitemap, or if you simply wish to use our tool as an XML sitemap validator. Use the “Export” and “Generate sitemap” tabs to select the types of URLs by status code that should be included in your map.
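
As an illustration of selecting URLs by status code, the sketch below builds a sitemap from crawl results and keeps only the statuses you allow. It is an assumption-laden example: `build_sitemap` and its `crawled` mapping of URL to status code are hypothetical and do not describe the tool's export format.

```python
import xml.etree.ElementTree as ET

# Namespace required on the root element by the sitemaps.org 0.9 protocol.
SITEMAP_XMLNS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(crawled, allowed_statuses=(200,)):
    """Build sitemap XML from {url: status_code}, keeping only allowed statuses."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_XMLNS)
    for url in sorted(crawled):
        if crawled[url] in allowed_statuses:
            # Each kept URL becomes <url><loc>...</loc></url>; text is XML-escaped.
            ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
    return ET.tostring(urlset, encoding="unicode")
```

Restricting `allowed_statuses` to 200 keeps redirects and error pages out of the generated file, which addresses the non-2xx issue at the source.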

Additional Features of the XML Sitemap Checker

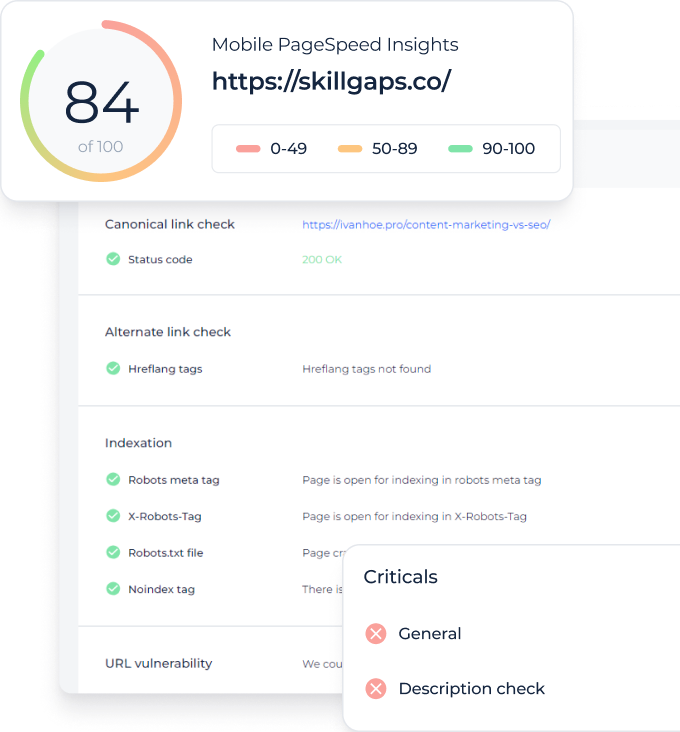

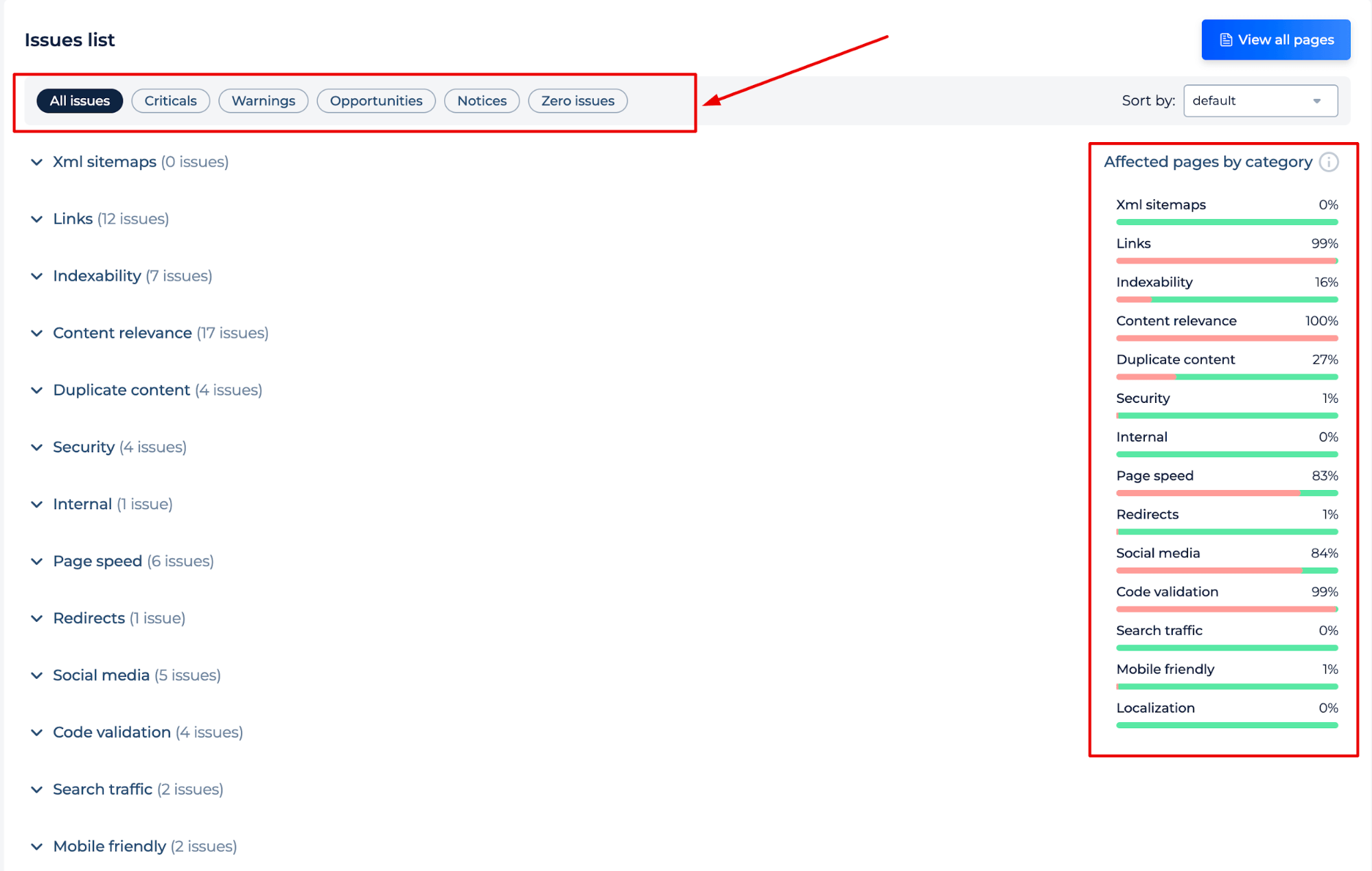

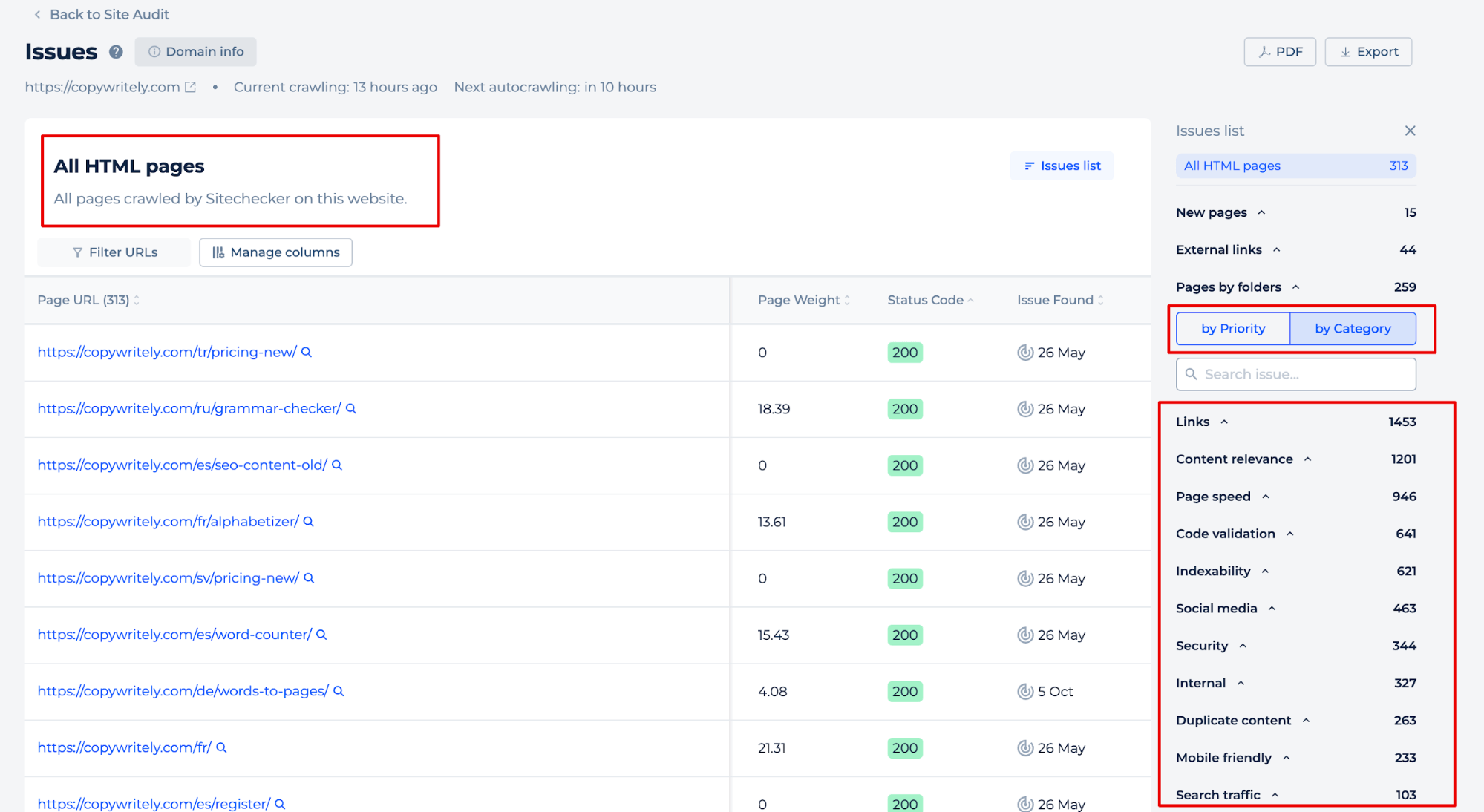

After site crawling, you will also receive a comprehensive site audit report, highlighting any potential problems and providing instructions on how to fix them. The report is sorted by issue type or category, allowing you to efficiently address the most crucial issues for your site’s success.

By clicking the ‘View All Pages’ button, you can identify issues on individual pages. Sort issues by priority or category, or manually add data to the pages you are interested in.

Final Idea

The Website Sitemap Checker is a tool designed to confirm that a site has an XML sitemap, identify any errors in it, and help improve the site’s search engine indexing efficiency. It offers a user-friendly interface within a unified dashboard for easy SEO management, as part of a complete SEO toolset. Checking for a sitemap is simple: you enter a domain name and review the results, which include a comprehensive audit report with detailed instructions on fixing the issues found. This helps you maintain a well-structured, SEO-optimized site.