Sitechecker SEO Blog

Stay ahead in SEO with our Sitechecker SEO Blog. Explore expert tips, latest trends, and powerful strategies to boost your website’s visibility!

A focused, cost-efficient SEO platform for agencies. Why YIPI chose Sitechecker

Read how YIPI cut critical technical SEO issues by 30 to 50% and reduced audit time by up to 30% by switching from Semrush to Sitechecker.

Ivan Palii

May 12, 2026

AI brand mentions in client reports. Why Dogwood sticks with Sitechecker

Ivan Palii

May 11, 2026

One platform for technical SEO and on-page checks. Why Druce Digital chose Sitechecker

Ivan Palii

May 7, 2026

SEO

Interviews

Interview

Nik Vujic on Webflow vs WordPress for SEO and Running a B2B SaaS Agency

Ivan Palii

Sep 17, 2024

Interview

SEO Tips from Spencer Haws, SEO Blogger and Founder of NichePursuits.com

Ivan Palii

May 17, 2021

Marketing

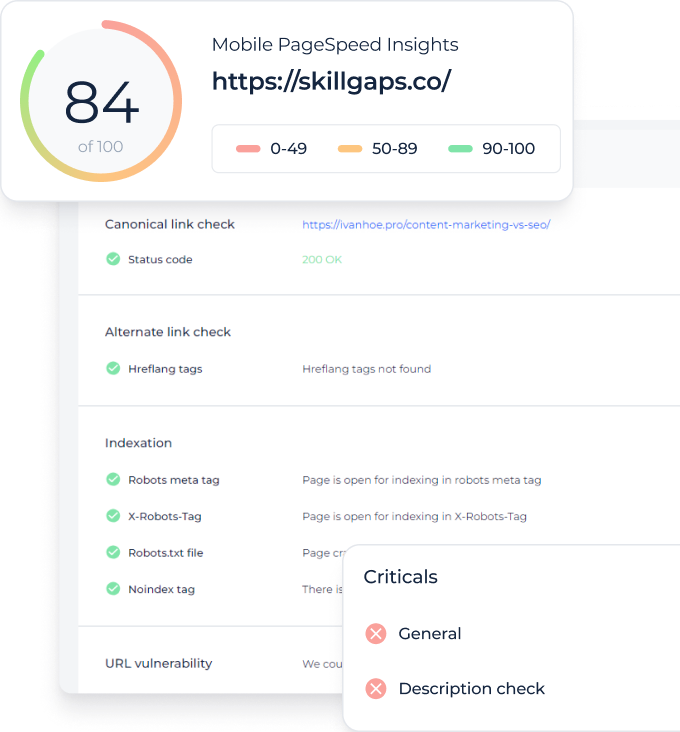

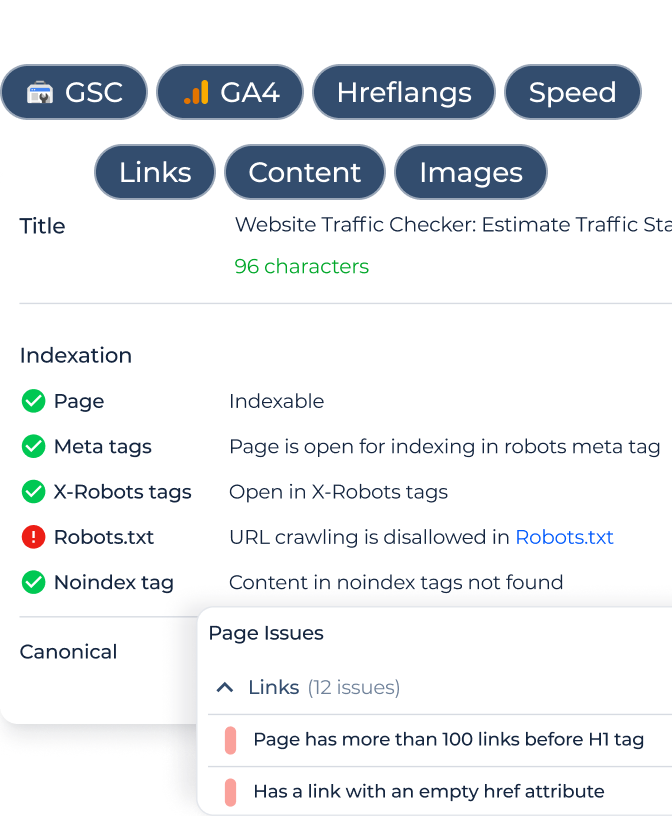

Optimize your site's health & unlock potential with our SEO Checker & Audit Tool

Improve your website’s on-page and technical SEO

Monitor all your website’s changes 24/7

Monitor your website rankings

Monitor and analyze all the backlinks