In web development and internet communications, 4xx status codes play an essential role. The 4xx class of Hypertext Transfer Protocol (HTTP) response status codes is a group of standard responses that signal client-side errors.

When a server returns a status code from the 4xx class, it indicates an error related to the request made by the client (often a web browser). These types of errors typically suggest that there’s an issue with the request sent to the server and not with the server itself. This differentiates them from 5xx status codes, which point to server-side errors.

The purpose of these 4xx status codes is to inform the client that it needs to modify or correct its request in some way before the server can fulfill it. In other words, these status codes provide a way for the server to communicate that it couldn’t understand or process the client’s request due to the client’s own error.

4xx status codes are quite diverse, each communicating a different kind of client-side error. For instance, a ‘400 Bad Request’ status code means that the server couldn’t understand the request due to invalid syntax. A ‘404 Not Found’ status, possibly the best-known of the group, is sent when the requested resource is not available on the server. A ‘403 Forbidden’ status, on the other hand, indicates that the client doesn’t have the necessary permissions to access the requested resource, even though the server knows the client’s identity.

Incorporating 404 error monitoring lets you promptly detect and address these errors, particularly 404 Not Found errors, which are the most common. Actively resolving client-side errors improves user experience, prevents SEO issues, and keeps your website performing well.

4xx Status Codes and SEO

When discussing 4xx status codes in the context of SEO (Search Engine Optimization), it’s important to understand that these status codes represent errors, and frequent or persistent errors can negatively impact a site’s search engine rankings. Search engines aim to provide the best possible user experience, and that includes providing links to resources that are available and useful.

Here’s a look at how common 4xx status codes can affect SEO:

| HTTP Status Code | Description | SEO Impact |
|---|---|---|
| 400 | Bad Request | Repeatedly encountering this error can discourage search engine bots from crawling your website, potentially harming your SEO. |
| 401 / 403 | Unauthorized / Forbidden | These status codes tell search engine crawlers that they can’t access the content. Pages returning these codes are typically not indexed, so they won’t appear in search results. |
| 404 | Not Found | Search engines remove 404 pages from their index over time, so this error can lead to a loss of visibility in search results. In addition, if a significant portion of a site’s pages return 404 errors, search engines might deem the site less reliable or lower quality, which could affect the site’s overall ranking. |
| 405 | Method Not Allowed | Usually not a significant SEO issue, but frequent 405 errors could discourage search engine bots from crawling your website. |
| 429 | Too Many Requests | This error tells search engine crawlers that they’re requesting too many resources too quickly from your site. If crawlers see it often, they may slow down or stop crawling your site, which can hurt your SEO. |

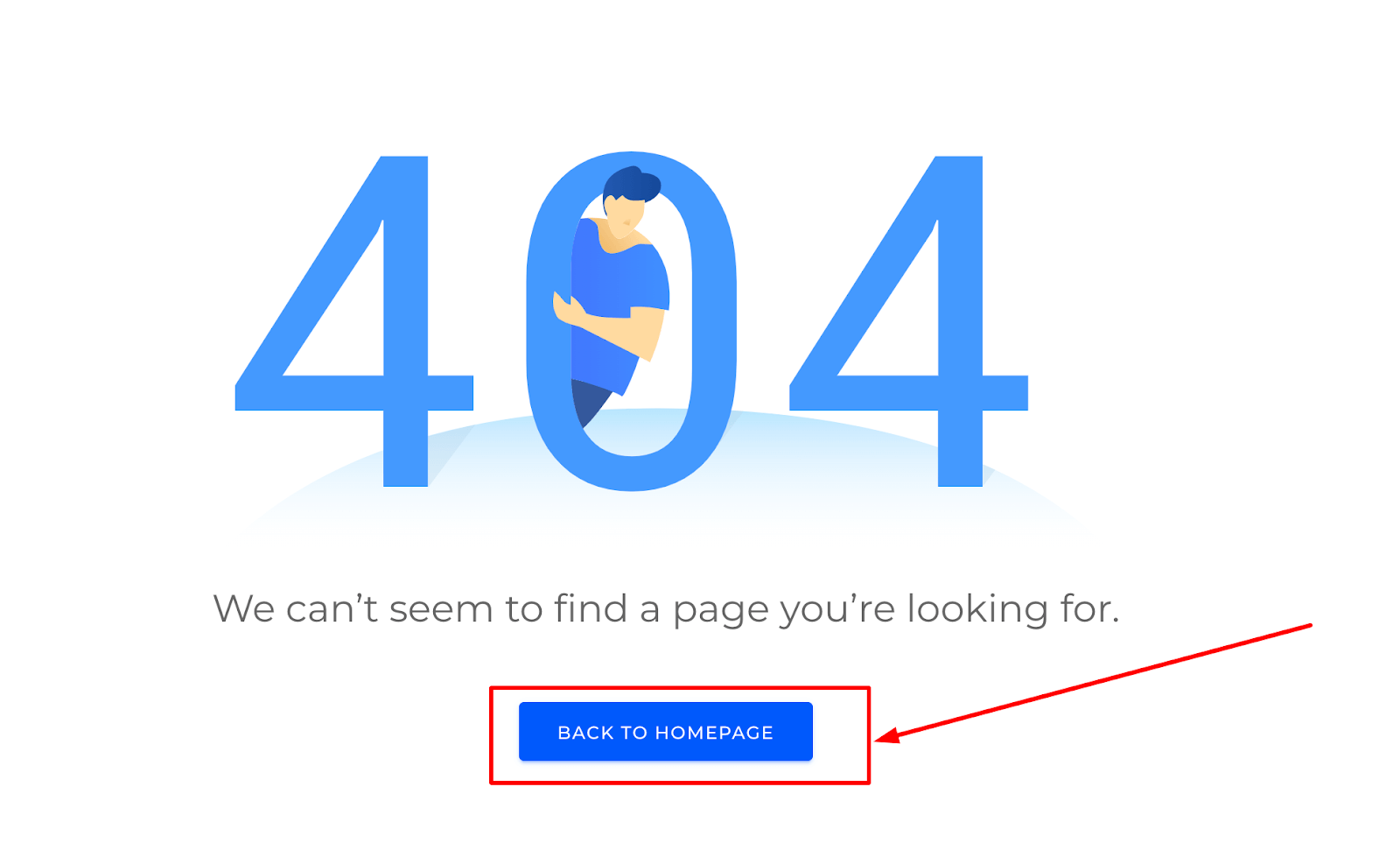

For unavoidable 404 errors, consider implementing a custom 404 page that helps users navigate back to a working page on your site. This can improve user experience and reduce the potential negative impact of 404 errors on your site’s SEO.
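A detail that is easy to miss is that the friendly page should still be served with a real 404 status. If it is served with 200 OK, search engines may treat it as a "soft 404". The sketch below is a minimal WSGI app that illustrates this. The PAGES dictionary and the page wording are hypothetical placeholders, not part of any real site.

```python
from html import escape

# Hypothetical set of pages that exist on the site.
PAGES = {
    "/": "<h1>Home</h1>",
    "/blog/": "<h1>Blog</h1>",
}

# Custom 404 page that points visitors back to working pages.
NOT_FOUND_TEMPLATE = """<!doctype html>
<title>Page not found</title>
<h1>Sorry, we couldn't find {path}</h1>
<p>Try the <a href="/">home page</a> or the <a href="/blog/">blog</a>.</p>
"""


def app(environ, start_response):
    """Minimal WSGI app that serves known pages and a helpful custom 404 page."""
    path = environ.get("PATH_INFO", "/")
    if path in PAGES:
        status, body = "200 OK", PAGES[path]
    else:
        # Keep the real 404 status: a friendly page sent with 200 OK
        # could be treated by search engines as a "soft 404".
        status = "404 Not Found"
        body = NOT_FOUND_TEMPLATE.format(path=escape(path))
    data = body.encode("utf-8")
    start_response(status, [
        ("Content-Type", "text/html; charset=utf-8"),
        ("Content-Length", str(len(data))),
    ])
    return [data]
```

To try it locally, you could serve it with the standard library: `from wsgiref.simple_server import make_server` and then `make_server("", 8000, app).serve_forever()`.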

To mitigate the potential negative SEO impact of 4xx status codes, site administrators should regularly check their websites for these errors. This can often be done through server logs or SEO tools that crawl your website in a similar manner to search engine bots. Once identified, efforts should be made to resolve these errors, which can often involve correcting misconfigured server settings or repairing broken links.
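As an illustration of the server-log approach, here is a rough sketch that tallies 4xx responses by status code and path. It assumes your server writes the Common Log Format or the "combined" format that extends it, which are common defaults for Apache and nginx. A custom log format would need a different pattern.

```python
import re
from collections import Counter

# Matches the start of a Common Log Format / combined log line (an assumption
# about your log format; adjust the pattern if your server logs differently).
LOG_LINE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+)[^"]*" (?P<status>\d{3}) \S+'
)


def count_client_errors(lines):
    """Count (status, path) pairs for every 4xx response in an access log."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match and match["status"].startswith("4"):
            counts[(int(match["status"]), match["path"])] += 1
    return counts
```

For example, `with open("access.log") as f: print(count_client_errors(f).most_common(10))` would list the ten most frequent client errors. The file name here is hypothetical. Paths that keep returning 404 are good candidates for redirects or link fixes.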

Detailed List of 4xx Status Codes and How to Fix Them

Understanding HTTP 4xx status codes is vital for troubleshooting website issues. These codes indicate client-side errors, where the client (usually a user’s browser) has made an invalid request. Here’s a concise list of common 4xx status codes, their meanings, and typical uses to help you manage these errors effectively.

Let’s look at them in detail:

| HTTP Status Code | Name | Description |
|---|---|---|
| 400 | Bad Request | The server could not understand the request due to invalid syntax. |
| 401 | Unauthorized | The client must authenticate itself to get the requested response. It’s used when authentication is required and has failed or has not yet been provided. |
| 403 | Forbidden | The client does not have access rights to the content, so the server refuses to return the requested resource. Unlike with 401, the client’s identity is known to the server. |
| 404 | Not Found | The server cannot find the requested resource. This is the best-known 4xx code, returned when a page or document doesn’t exist at the requested URL. |
| 405 | Method Not Allowed | The method specified in the request is not allowed for the resource identified by the URL. |
| 408 | Request Timeout | The server timed out waiting for the request. Some servers send this response on an idle connection even without any previous request from the client. |
| 429 | Too Many Requests | The client has sent too many requests in a given amount of time (“rate limiting”). |
| 451 | Unavailable For Legal Reasons | The server is denying access to the resource due to legal restrictions or censorship. |

Understanding 4xx status codes is crucial for anyone working with web servers, developing web applications, or working with SEO. Knowing what these codes mean and how to resolve the issues they indicate can drastically improve the user experience and the efficiency of client-server communications.

400 Bad Request

A 400 Bad Request is an HTTP status code indicating that the request sent to the server is invalid or malformed. Because the server cannot understand or process it, the request cannot be fulfilled.

This error can occur for several reasons, including:

- Syntax error in the request. If the request made by the client has an incorrect syntax, the server will not be able to understand it and will return a 400 Bad Request status. This could be due to incorrect punctuation, misspelling, improper formatting, or incorrect command sequences.

- Invalid request message framing. If the framing of the HTTP request message is not correct, the server may be unable to understand the request and return a 400 Bad Request. This could be due to the incorrect use of delimiters or other control characters.

- Deceptive request routing. If a request is attempting to trick the server into thinking it’s a different kind of request than it actually is, the server may reject it with a 400 Bad Request.

- Invalid or expired cookies. Sometimes, if the client has invalid or expired cookies that the server cannot understand, a 400 Bad Request may occur.

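- File size too large. If the request sent by the client is too large for the server to handle, the server may return a 400 Bad Request. This is common when trying to upload a file that exceeds the maximum file size limit. The dedicated status code for this case is 413 Content Too Large (formerly Payload Too Large), but some servers respond with a generic 400 instead.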

The best way to troubleshoot a 400 Bad Request error depends on what caused it. In many cases, it can be as simple as checking the URL for mistakes or clearing the browser’s cookies. In more complex cases, like with issues in the request framing, it might involve a detailed examination of the request being sent to identify any syntax errors or deceptive request routing.
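On the server side, a 400 is typically produced by input validation that runs before any real work is done. The sketch below handles a request body that is expected to be a JSON object and rejects malformed syntax with a 400 and a short explanation. The function name and error messages are illustrative assumptions, not any particular framework's API.

```python
import json


def parse_json_body(raw):
    """Return (status, payload_or_error) for a request body expected to be a JSON object."""
    try:
        data = json.loads(raw.decode("utf-8"))
    except (UnicodeDecodeError, json.JSONDecodeError) as exc:
        # Malformed syntax: the client has to fix the request before retrying.
        return 400, {"error": f"malformed JSON: {exc}"}
    if not isinstance(data, dict):
        # Well-formed JSON, but not the shape this endpoint accepts.
        return 400, {"error": "expected a JSON object"}
    return 200, data
```

Including a short error message in the 400 response body makes troubleshooting much easier for the client than a bare status code.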

401 Unauthorized

The 401 Unauthorized is an HTTP status code signaling that the requested resource requires authentication and that the client’s authentication has failed or has not yet been provided.

There are several reasons you might see a 401 Unauthorized error, including:

- No Authentication. The client made a request for a protected resource, but did not provide any authentication with the request.

- Failed Authentication. The client attempted to authenticate but provided invalid credentials (like a wrong username or password).

- Insufficient Permissions. The client successfully authenticated but does not have permission to access the requested resource. Strictly speaking, this case calls for 403 Forbidden, but some servers return 401 for it.

A 401 Unauthorized response must include a WWW-Authenticate header field that defines the authentication method to use for the requested resource. For example, the server might send WWW-Authenticate: Basic realm="Example", which means the server expects the client to authenticate using the Basic authentication scheme.

To resolve a 401 Unauthorized error, the client typically must provide valid authentication credentials with the request. If the client has already provided credentials, it should check that they are correct. If the credentials are correct and the client still receives a 401 Unauthorized error, it may lack the necessary permissions for the requested resource, a case that servers should normally report with 403 Forbidden.
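To make the mechanics concrete, here is a minimal sketch of a server-side Basic authentication check. It answers with 401 and a WWW-Authenticate challenge whenever credentials are missing, malformed, or wrong. The USERS store and the realm name are hypothetical, and a real system would store password hashes rather than plaintext passwords.

```python
import base64
import binascii
import hmac

# Hypothetical credential store (plaintext only for illustration).
USERS = {"alice": "s3cret"}

# Challenge sent with every 401 so the client knows which scheme to use.
CHALLENGE = {"WWW-Authenticate": 'Basic realm="Members area", charset="UTF-8"'}


def check_basic_auth(authorization):
    """Return (status, headers) for a request carrying this Authorization header value."""
    scheme, _, token = (authorization or "").partition(" ")
    if scheme.lower() != "basic" or not token:
        return 401, CHALLENGE  # no (usable) credentials supplied
    try:
        decoded = base64.b64decode(token, validate=True).decode("utf-8")
    except (binascii.Error, UnicodeDecodeError):
        return 401, CHALLENGE  # malformed credentials
    username, _, password = decoded.partition(":")
    expected = USERS.get(username)
    # Constant-time comparison avoids leaking how much of the password matched.
    if expected is None or not hmac.compare_digest(expected.encode(), password.encode()):
        return 401, CHALLENGE  # wrong username or password
    return 200, {}
```

A client retrying after a 401 would send a header such as `Authorization: Basic YWxpY2U6czNjcmV0`, which is the Base64 encoding of `alice:s3cret`.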

403 Forbidden

The 403 Forbidden is an HTTP status code that indicates the server understood the request but refuses to authorize it. This status is similar to 401 Unauthorized, except that for a 403 response, authenticating or re-authenticating makes no difference.

Key points about a 403 Forbidden response:

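- Valid Credentials but Insufficient Permissions. A 403 Forbidden error typically means that the client authenticated successfully but does not have the right permissions to access the requested resource. Servers can also return 403 to unauthenticated clients, for example when access is blocked by IP address.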

- Authentication will not help. Unlike a 401 Unauthorized response, a 403 Forbidden response indicates that providing valid authentication credentials will not grant access to the resource.

- May be temporary or permanent. The 403 Forbidden status code can either be temporary or permanent, depending on the nature of the resource or the server configuration. For instance, a resource may only be temporarily locked or secured due to ongoing administrative tasks.

To resolve a 403 Forbidden error, you must first determine why the server is refusing the request. The reasons may vary; it could be server rules that restrict access to the particular resource, or user permissions might not be set up correctly. If you’re the server’s owner or administrator, checking the server’s configuration and the permission settings should be your first steps. However, if you’re a client trying to access a website or service, you may need to reach out to the website’s owner or administrator for assistance.
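The difference between 401 and 403 often comes down to a single branch in the server's access check. The sketch below uses a hypothetical role-based access list. It returns 401 when the client has not identified itself, because logging in might help. It returns 403 when the client is identified but its roles do not allow access, because logging in again would not change anything.

```python
# Hypothetical access-control list: which roles may read each path prefix.
ACL = {
    "/admin/": {"admin"},
    "/reports/": {"admin", "analyst"},
}


def authorize(path, user_roles):
    """Return 200, 401, or 403 for a request to `path`.

    `user_roles` is None when the client has not authenticated at all.
    """
    for prefix, allowed in ACL.items():
        if path.startswith(prefix):
            if user_roles is None:
                return 401  # unknown identity: authenticating might help
            if not allowed & set(user_roles):
                return 403  # known identity, insufficient permissions
            break
    return 200
```

If you administer the server, rules like these are the "permission settings" to review when legitimate users report unexpected 403 errors.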

404 Not Found

The 404 Not Found is an HTTP status code that indicates that the server was unable to find the requested resource. This error response is probably the most recognizable and common of all HTTP status codes, often seen when a webpage or URL is no longer available on a website.

Here are the key points about a 404 Not Found response:

- Resource Does Not Exist. This code is typically used when the server cannot find the specific resource that was requested by the client. The resource may have been deleted or moved to a different URL, and the link has not been updated.

- Server is Operational. The 404 status code doesn’t imply a server error. In fact, it confirms that the server is functioning correctly. It’s simply reporting that it was unable to find the requested resource.

- Temporary or Permanent. A 404 Not Found error can be either temporary or permanent. Sometimes, a resource is only temporarily unavailable due to updates or changes being made on the website. However, in most cases, the error indicates that the resource is permanently unavailable at the specified URL.

The most common way to resolve a 404 Not Found error is to ensure the URL is entered correctly. Typos or errors in the URL are common causes of this error. If the URL is correct, then the resource may have been moved or deleted. If you’re a website owner or administrator, you’ll want to ensure your site’s links are updated regularly to prevent 404 errors. If you’re a user, you might try reaching out to the website owner if a resource you expect to be available is returning a 404 error.
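When a page has moved rather than disappeared, site owners commonly answer the old URL with a permanent redirect (301) instead of a 404. That way, outdated links and search results still lead somewhere useful. The sketch below shows that decision. The EXISTING and MOVED tables are hypothetical stand-ins for your site's real routing data.

```python
# Hypothetical site data: pages that exist and URLs that have moved.
EXISTING = {"/", "/pricing/", "/blog/http-status-codes/"}
MOVED = {"/blog/4xx-codes/": "/blog/http-status-codes/"}


def resolve(path):
    """Return (status, redirect_location) for a requested path."""
    if path in EXISTING:
        return 200, None
    if path in MOVED:
        # Permanent redirect so old links and indexed URLs keep working.
        return 301, MOVED[path]
    # Genuinely missing: a 404 tells crawlers to drop the URL over time.
    return 404, None
```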

405 Method Not Allowed

The 405 Method Not Allowed is an HTTP status code that indicates the request method is known by the server but is not supported by the requested resource.

The key points about a 405 Method Not Allowed response are as follows:

- Valid Methods but Not Allowed. The HTTP method used in the request (such as GET, POST, PUT, DELETE, etc.) is recognized by the server, but it is not allowed for the specific URL that an application is trying to interact with.

- Mismatched Method and Resource. A common scenario where a 405 Method Not Allowed status code might be returned is when the method used is not designed for the type of the requested resource. For example, using GET on a form endpoint that requires data to be submitted via POST, or using PUT on a read-only resource.

- Server Requirement. The server must include an Allow header in a 405 Method Not Allowed response to inform the client about the request methods that are supported for the resource. For instance, Allow: GET, POST, HEAD.

To fix a 405 Method Not Allowed error, the client needs to change the request method to one that’s appropriate for the requested resource, as indicated in the Allow header field in the server’s response. The appropriate method will typically be documented in the API or web service’s documentation. If you’re the server’s owner or administrator, you should ensure the server’s configuration allows the necessary HTTP methods for each resource.
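Here is a minimal sketch of how a server might produce a correct 405, including the required Allow header, from a routing table. The ROUTES table and its paths are hypothetical.

```python
# Hypothetical routing table: the methods each resource supports.
ROUTES = {
    "/contact": {"GET", "HEAD", "POST"},
    "/terms": {"GET", "HEAD"},
}


def check_method(path, method):
    """Return (status, headers) for `method` on `path`."""
    allowed = ROUTES.get(path)
    if allowed is None:
        return 404, {}  # unknown resource, not a method problem
    if method not in allowed:  # HTTP method names are case-sensitive
        # RFC 9110 requires an Allow header listing the supported methods.
        return 405, {"Allow": ", ".join(sorted(allowed))}
    return 200, {}
```

Note the ordering: an unknown path returns 404 before any method check. A 405 only makes sense for a resource that actually exists.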

408 Request Timeout

The 408 Request Timeout is an HTTP status code that indicates that the client did not produce a request within the time that the server was prepared to wait. Essentially, the server timed out waiting for the request.

Here are some key aspects of a 408 Request Timeout response:

- Slow Client Request. A 408 Request Timeout status code typically means the client took too long to complete its request. This might occur because of a slow network connection, because the client is busy with other tasks, or for a variety of other reasons.

- Server Timeout Limits. Every server has its own timeout limit, which may vary based on server configuration, server load, network traffic, and other factors. If a request isn’t completed within this limit, the server might return a 408 Request Timeout.

- Can Be Resent. When a client receives a 408 Request Timeout, it’s generally safe to attempt the same request again. However, the client should be prepared to handle the same timeout response if the server is still unable to process the request in a timely manner.

To resolve a 408 Request Timeout error, you might first try to simply make the request again. However, if the error persists, it might be due to issues with a slow or unstable network connection, in which case those issues will need to be addressed. If you’re a server administrator and you’re seeing frequent 408 Request Timeout responses, you might need to adjust your server’s timeout settings or look into optimizing server performance.
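On the client side, "make the request again" can be automated with a small, bounded retry loop. In the sketch below, `send` is a hypothetical callable that performs the request and returns its status code. Bounding the attempts ensures a persistently slow connection surfaces as an error rather than retrying forever.

```python
import time


def send_with_retry(send, max_attempts=3, delay=1.0):
    """Call `send()` again while it returns 408, up to `max_attempts` calls in total.

    `send` is a hypothetical callable that returns an HTTP status code.
    """
    for attempt in range(1, max_attempts + 1):
        status = send()
        if status != 408 or attempt == max_attempts:
            return status
        time.sleep(delay)  # brief pause before resending
```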

429 Too Many Requests

The 429 Too Many Requests is an HTTP status code that indicates the user has sent too many requests in a given amount of time (“rate limiting”).

The main points about a 429 Too Many Requests response are:

- Rate Limiting. This response is used for rate limiting. Servers use these responses to let the client know that they’ve exceeded a rate limit and that they need to slow down. This is important to prevent abuse and maintain the health and quality of service of the server.

- Temporary Block. It’s generally a temporary block, and the client can resume their requests after a certain period of time.

- Retry-After Header. Servers that implement rate limiting using the 429 Too Many Requests response might include a Retry-After header to indicate how long the client should wait before retrying, expressed either as a number of seconds or as an HTTP date.
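To illustrate the server side of rate limiting, here is a sketch of a fixed-window counter. This is one simple rate-limiting technique among several, such as token buckets or sliding windows. Each client may make `limit` requests per `window` seconds. Further requests get a 429 with a Retry-After value in seconds. The client identifiers and limits are assumptions for illustration, and the counters live in memory, so they would not be shared across multiple server processes.

```python
import math
import time


class FixedWindowLimiter:
    """Allow at most `limit` requests per client in each `window`-second window."""

    def __init__(self, limit, window, clock=time.monotonic):
        self.limit = limit
        self.window = window
        self.clock = clock
        self.windows = {}  # client id -> (window start time, request count)

    def check(self, client_id):
        """Return (status, headers) for one request from `client_id`."""
        now = self.clock()
        start, count = self.windows.get(client_id, (now, 0))
        if now - start >= self.window:
            start, count = now, 0  # the previous window has expired
        if count >= self.limit:
            # Tell the client how many whole seconds remain in this window.
            retry_after = math.ceil(start + self.window - now)
            return 429, {"Retry-After": str(max(retry_after, 1))}
        self.windows[client_id] = (start, count + 1)
        return 200, {}
```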

To resolve a 429 Too Many Requests error, the client should respect the Retry-After header value if provided and slow down the rate of requests to the server. If this doesn’t work, the client may need to reach out to the server administrator for more information.

In a broader context, consider implementing exponential backoff when making requests to an API. This involves progressively lengthening the delay between retry attempts, reducing the load on the server and increasing the likelihood that the request will eventually succeed.
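The sketch below combines both pieces of advice. It honors Retry-After when the server sends it, in either the seconds form or the HTTP-date form. Otherwise it falls back to exponential backoff with a cap. The optional "full jitter" randomization spreads retries from many clients over time. The default base delay and cap values are arbitrary assumptions.

```python
import random
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime


def backoff_delay(attempt, retry_after=None, base=1.0, cap=60.0, jitter=True):
    """Seconds to wait before retry number `attempt` (counting from 0)."""
    if retry_after is not None:
        if retry_after.isdigit():
            return float(retry_after)  # Retry-After given in seconds
        try:
            when = parsedate_to_datetime(retry_after)  # Retry-After given as an HTTP date
            return max(0.0, (when - datetime.now(timezone.utc)).total_seconds())
        except (TypeError, ValueError):
            pass  # unparseable header: fall back to exponential backoff
    delay = min(cap, base * 2 ** attempt)  # 1, 2, 4, 8, ... seconds, capped
    return random.uniform(0, delay) if jitter else delay
```

With jitter disabled, the delays grow as 1, 2, 4, 8 seconds and so on, until they reach the 60-second cap.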

451 Unavailable For Legal Reasons

The 451 Unavailable For Legal Reasons is an HTTP status code that indicates the server is denying access to the requested resource due to legal restrictions or censorship.

Key points about 451 Unavailable For Legal Reasons:

- Legal Restrictions. This response is used when the server is legally obligated to block access to a resource, such as due to government regulations or court orders.

- Censorship. It may be used in cases where content is restricted based on political, religious, or other sensitive reasons.

- Transparency. The status code provides transparency by informing the client that the resource is unavailable specifically due to legal reasons.

- Limited Impact. The response is typically specific to certain jurisdictions and may not affect all users accessing the resource.
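As a sketch of how a jurisdiction-specific block might look on the server, the function below returns 451 only for requests from listed countries. RFC 7725, which defines this status code, recommends a Link header with rel="blocked-by" that identifies the entity implementing the block, plus an explanation of the legal demand in the response body. The BLOCKED table and the BLOCKED_BY URL are hypothetical.

```python
# Hypothetical legal blocklist: path -> ISO country codes where it is blocked.
BLOCKED = {"/videos/episode-7": {"DE", "FR"}}

# Hypothetical URI identifying the entity that implements the block.
BLOCKED_BY = "https://example.com/legal"


def legal_check(path, country):
    """Return (status, headers) for `path` requested from `country`."""
    if country in BLOCKED.get(path, set()):
        # RFC 7725 recommends a Link header with rel="blocked-by"; the body
        # should explain the legal demand behind the block.
        return 451, {"Link": f'<{BLOCKED_BY}>; rel="blocked-by"'}
    return 200, {}
```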

To address a 451 Unavailable For Legal Reasons error, the client should respect the server’s decision and refrain from attempting to access the resource. Contacting the server administrator or seeking legal advice may be necessary for further information or resolution.

It’s important to note that support for this status code varies between servers and platforms; some may return a 403 or 404 for legally restricted content instead.

Common Problems and Solutions for 4xx Status Codes in SEO

- 404 Not Found Errors

- 401 Unauthorized and 403 Forbidden Errors

- 400 Bad Request Errors

- 405 Method Not Allowed Errors

- 429 Too Many Requests Errors

Remember that regularly monitoring your website for these errors can help maintain your SEO ranking. SEO tools that crawl your website the way search engine bots do can help you identify and address these issues.
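To show roughly what such a crawler does, here is a simplified breadth-first sketch that follows internal links and records every URL answering with a 4xx status. It is not how any particular commercial tool works. The `fetch` callable is a hypothetical placeholder for your HTTP client, returning a status code and the page's HTML. The sketch only checks links on the starting site's host.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin, urlparse


class LinkExtractor(HTMLParser):
    """Collect href values from <a> tags."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def find_broken_links(start_url, fetch, max_pages=500):
    """Breadth-first crawl of one site; return {url: status} for 4xx responses.

    `fetch(url)` is a hypothetical callable returning (status, html_text).
    """
    site = urlparse(start_url).netloc
    queue, seen, broken = deque([start_url]), {start_url}, {}
    fetched = 0
    while queue and fetched < max_pages:
        url = queue.popleft()
        status, html = fetch(url)
        fetched += 1
        if 400 <= status < 500:
            broken[url] = status  # record the client error; don't parse the page
            continue
        parser = LinkExtractor()
        parser.feed(html)
        for href in parser.links:
            # Resolve relative links and drop #fragments before de-duplicating.
            link = urldefrag(urljoin(url, href)).url
            if urlparse(link).netloc == site and link not in seen:
                seen.add(link)
                queue.append(link)
    return broken
```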

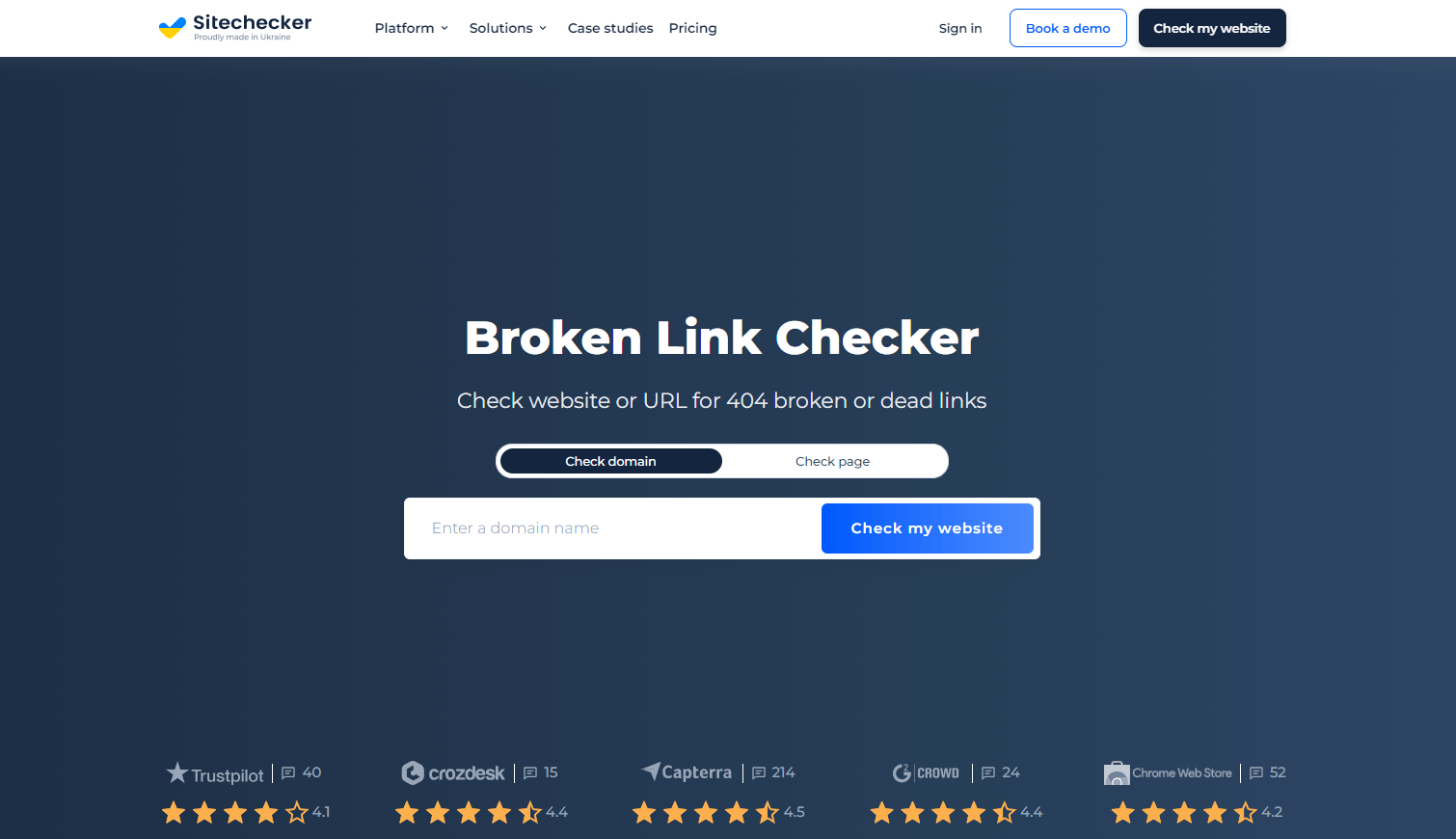

Detect 4xx Issues with Free Broken Link Checker

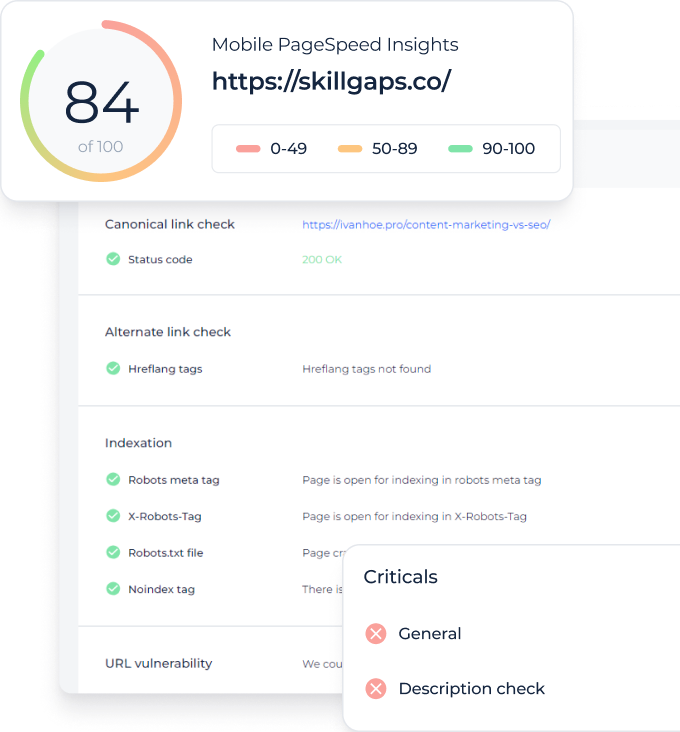

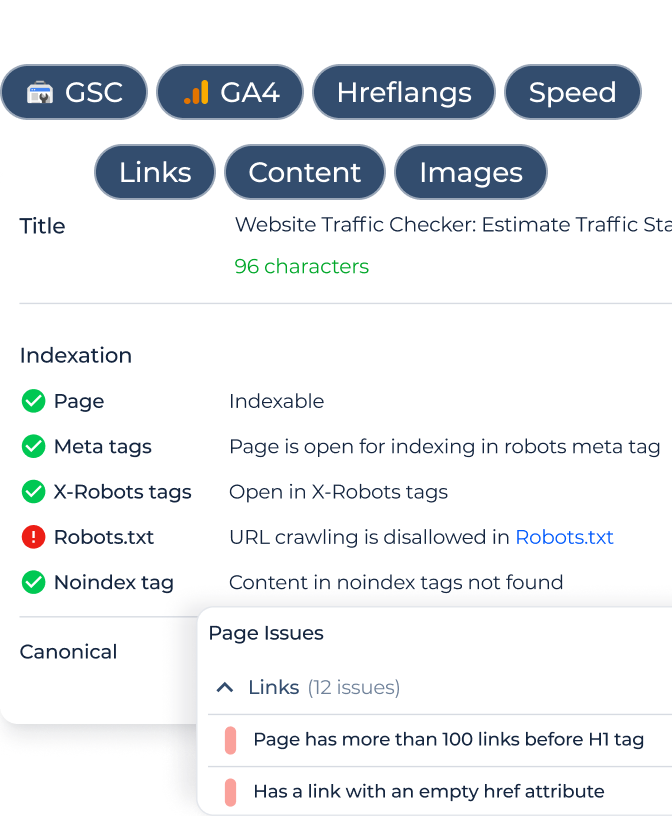

Broken Link Checker is a handy SEO tool for identifying and fixing 4xx errors. It operates like a search engine bot, scanning your entire website to find and flag any links that return 4xx status codes. After the scan, you receive a detailed report of all broken links, including the problematic URLs and their specific 4xx status codes. Sitechecker further assists by helping you prioritize the most critical issues and providing advice on how to resolve them. Running a rescan after implementing fixes ensures that all problems have been properly addressed.

Conclusion

4xx status codes indicate client-side errors in web development. They inform the client about issues with their request and suggest modifications. These errors can negatively impact SEO, affecting search engine rankings. Common examples include ‘400 Bad Request’ and ‘404 Not Found.’ To mitigate the impact, it’s advised to implement custom 404 pages and regularly check for errors.