What Is the Noindex Tag?

The “noindex” tag is a directive used in SEO to instruct search engines not to index a particular webpage. When a search engine’s crawler encounters this tag on a page, it knows not to include that page in its search results.

Here are some examples of when you might use the meta noindex tag:

- A “Thank You” page after a user completes a purchase.

- A landing page for a paid ad campaign.

- A page that is under construction or not yet ready for public viewing.

- A page that duplicates content already indexed on another website (for example, syndicated content).

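- A page that contains sensitive or confidential information. Noindex only hides such a page from search results, so it should also be protected by a login or other access control.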

This tag can be implemented in two ways:

- HTML Meta Tag: This is placed within the <head> section of an HTML document.

<meta name="robots" content="noindex">

- HTTP Header: It can also be sent as an X-Robots-Tag HTTP header in the server’s response, which works for non-HTML files such as PDFs as well:

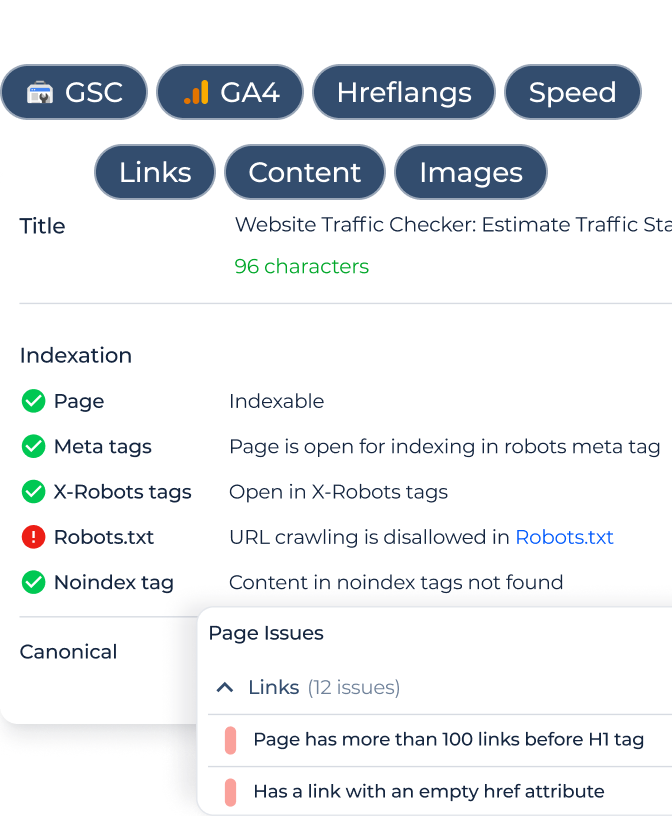

X-Robots-Tag: noindex

- Robots.txt: Although “noindex” isn’t officially supported in the robots.txt file, many people mistakenly believe it is. The robots.txt file is used to prevent crawlers from accessing specific paths or URLs, but it doesn’t prevent indexing: a blocked URL can still be indexed if it is linked from elsewhere.
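For the HTTP header method, the header has to be added by whatever serves the response. As a minimal sketch, assuming a Python WSGI application and two hypothetical paths (/thank-you and /checkout/confirmation), the server could attach the header like this:

```python
from wsgiref.util import setup_testing_defaults

# Hypothetical URLs that should stay out of search results.
NOINDEX_PATHS = {"/thank-you", "/checkout/confirmation"}


def app(environ, start_response):
    """Minimal WSGI app that adds an X-Robots-Tag header to selected paths."""
    path = environ.get("PATH_INFO", "/")
    headers = [("Content-Type", "text/html; charset=utf-8")]
    if path in NOINDEX_PATHS:
        # Same instruction as <meta name="robots" content="noindex">,
        # but delivered in the HTTP response instead of the HTML.
        headers.append(("X-Robots-Tag", "noindex"))
    start_response("200 OK", headers)
    return [b"<html><head><title>Example</title></head><body>Hello</body></html>"]


def response_headers(path):
    """Call the app locally (no network) and return its headers as a dict."""
    environ = {}
    setup_testing_defaults(environ)
    environ["PATH_INFO"] = path
    captured = {}

    def start_response(status, headers):
        captured.update(headers)

    app(environ, start_response)
    return captured


if __name__ == "__main__":
    print(response_headers("/thank-you"))  # includes X-Robots-Tag
    print(response_headers("/blog/post"))  # no X-Robots-Tag
```

In practice, the same header is often set in the web server or CDN configuration instead. The logic is identical: decide per URL and add one response header.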

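It’s important to remember that while “noindex” tells search engines not to index a page, it doesn’t prevent them from crawling it. In fact, a crawler has to fetch the page to see the tag at all. Adding “nofollow” doesn’t stop crawling either, because it applies to the links on the page rather than to the page itself. The robots.txt file is what blocks crawling. However, if you disallow a URL there, search engines can no longer read its noindex tag, and the URL may still be indexed if other pages link to it.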
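The trade-off between robots.txt and noindex can be illustrated with Python’s standard urllib.robotparser module. In this sketch, which assumes a hypothetical robots.txt for example.com, a crawler that honors the file never fetches the /private/ page, so it can never read a noindex tag placed on it:

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt contents for example.com.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

for url in ("https://example.com/private/old-offer", "https://example.com/blog/post"):
    if parser.can_fetch("Googlebot", url):
        print(f"{url}: crawlable, so a noindex tag on it can be read")
    else:
        print(f"{url}: blocked, so a noindex tag on it is never read")
```

If the goal is to keep a page out of search results, leave it crawlable and rely on noindex. Reserve Disallow for URLs the crawler does not need to see at all.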

Differences Between “noindex” and Other Meta Tags Like “nofollow,” “noarchive,” and “nosnippet”

The “noindex” directive is one of several meta tags or directives that can be used to guide search engine behavior on specific web pages. Let’s explore how it differs from similar tags:

noindex:

Purpose: Tells search engines not to index the page. This means the page won’t appear in search results.

Usage:

<meta name="robots" content="noindex">

nofollow:

Purpose: Directs search engines not to follow the links on the page or pass any credit (in terms of SEO “link juice”) to the linked pages. The behavior of this directive has evolved over time. Originally, it meant not to pass any authority to the linked pages. Now it is treated more as a hint that you don’t endorse the links, and search engines such as Google may still follow them for discovery purposes.

Usage:

<meta name="robots" content="nofollow">

noarchive:

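Purpose: Prevents search engines from storing a cached copy of the page. As a result, users won’t see a “Cached” link in the search results for that page. Google has since retired its cached-page links, so this directive no longer has a visible effect in Google Search.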

Usage:

<meta name="robots" content="noarchive">

nosnippet:

Purpose: Tells search engines not to display a text snippet (like a meta description) or a video preview for the page in the search results. Google may still show a static image thumbnail. It also prevents the page’s content from being shown as a featured snippet.

Usage:

<meta name="robots" content="nosnippet">

Each of these tags serves a distinct purpose, though they can be combined in a single meta tag if you wish. For example:

<meta name="robots" content="noindex, nofollow, noarchive, nosnippet">

This meta tag would instruct search engines not to index the page, not to follow links on the page, not to cache the page, and not to display snippets or previews of the page in the search results.
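Because combined directives are just a comma-separated list, reading them back is straightforward. The sketch below uses hypothetical helpers, parse_robots_content and explain. It splits a content value case-insensitively and expands none, which Google treats as shorthand for "noindex, nofollow":

```python
# Plain-language meanings of the directives discussed above.
MEANINGS = {
    "noindex": "do not show this page in search results",
    "nofollow": "do not follow the links on this page",
    "noarchive": "do not store a cached copy of this page",
    "nosnippet": "do not show a text snippet or preview in results",
}


def parse_robots_content(content: str) -> set:
    """Split a robots meta content value such as "noindex, nofollow" into directives."""
    directives = {part.strip().lower() for part in content.split(",") if part.strip()}
    if "none" in directives:
        # "none" is shorthand for "noindex, nofollow".
        directives.discard("none")
        directives |= {"noindex", "nofollow"}
    return directives


def explain(content: str) -> list:
    """Describe each directive; unknown ones are flagged rather than guessed."""
    return [
        f"{d}: {MEANINGS.get(d, 'not covered by this sketch')}"
        for d in sorted(parse_robots_content(content))
    ]


if __name__ == "__main__":
    for line in explain("noindex, nofollow, noarchive, nosnippet"):
        print(line)
```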

Remember, these directives are only “requests” to search engines. Major search engines like Google honor them in practice, but they’re not absolutely binding, and a search engine or other crawler could choose to ignore them.

Noindex SEO Implications

The noindex tag has several SEO implications. Here are the most important considerations:

| SEO Implication | Details |
| --- | --- |
| Exclusion from Search Results | The primary function of the noindex tag is to prevent a page from appearing in search engine results. When you use this tag, you’re effectively telling search engines to exclude the page from their index. |
| Avoiding Duplicate Content | If you have pages with duplicate or very similar content, noindex can keep the extra copies out of the index so that they don’t compete with or dilute the ranking of the primary version. For example, if you have printer-friendly versions of webpages or if you syndicate content from other sources, you might want to use this tag to prevent these pages from being indexed. |
| Preserving Crawl Budget | Search engines allocate each site a limited ‘crawl budget’: the number of pages they will crawl within a certain timeframe, which matters most for websites with a large number of pages. Noindex on its own does little to save that budget, because crawlers still have to fetch a page to read the tag. For low-value URLs that don’t need to be crawled at all (e.g., internal search results or system-generated webpages), Google recommends blocking crawling in robots.txt instead, so that bots spend more of their time on your important, valuable pages. |
| Maintaining Site Quality in SERPs | Using noindex on low-quality pages that don’t provide unique value can help maintain the overall quality of your website in the eyes of search engines. This can be particularly useful for ‘thin’ pages with little content or for pages that might be seen as doorway pages. |
| Temporary vs. Permanent Removal | While noindex can prevent a page from appearing in search results, it does not delete the page from your website. If you want to bring the page back into search results in the future, you can simply remove the noindex tag. If you want a page removed permanently, you’d have to use more drastic measures, such as deleting the page and serving a 410 Gone status. |
| Conflicts with Canonical Tags | If a noindex tag is used on a page that’s designated as the canonical version of a piece of content, it sends mixed signals to search engines. It’s generally not recommended to combine noindex and rel=canonical on the same page. |
| Potential for Misuse | If applied accidentally or without understanding, the noindex directive can cause significant pages or even entire sections of a website to disappear from search results. It’s essential to use this tag with caution and review its application regularly. |
| Does Not Prevent Crawling | Noindex prevents indexing but does not stop search engines from crawling the webpage. In fact, they must crawl it to see the tag. Adding nofollow only affects the links on the page, and disallowing the URL in robots.txt stops crawlers from reading the noindex at all, so avoid combining a robots.txt block with noindex on the same URL. |
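Several of these pitfalls can be caught with a simple audit once you have collected each page’s signals, for example from a site crawl. The sketch below assumes a hypothetical PageSignals record. It flags noindex pages that also carry a rel=canonical tag, that are blocked in robots.txt, or that you want to rank:

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class PageSignals:
    """Hypothetical per-URL audit record; field names are illustrative."""
    url: str
    noindex: bool
    canonical: Optional[str] = None   # href of rel="canonical", if present
    blocked_by_robots_txt: bool = False
    should_rank: bool = False         # e.g. listed in your XML sitemap


def audit(page: PageSignals) -> List[str]:
    """Return warnings for risky noindex combinations."""
    warnings: List[str] = []
    if not page.noindex:
        return warnings  # these checks only concern noindexed pages
    if page.canonical is not None:
        warnings.append("noindex and rel=canonical on the same page send mixed signals")
    if page.blocked_by_robots_txt:
        warnings.append("robots.txt blocks crawling, so the noindex may never be read")
    if page.should_rank:
        warnings.append("a page you want in search results is marked noindex")
    return warnings


if __name__ == "__main__":
    page = PageSignals(
        url="https://example.com/pricing",
        noindex=True,
        canonical="https://example.com/pricing",
        should_rank=True,
    )
    for warning in audit(page):
        print(f"{page.url}: {warning}")
```

A noindexed URL that also appears in your sitemap is a common sign that the tag was applied by accident, which is why the sketch treats sitemap membership as one possible source for should_rank.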

In conclusion, while the noindex tag can be a powerful tool in the SEO toolkit, it’s crucial to apply it carefully. Incorrect use or overuse can have unintended consequences, potentially harming a website’s visibility and traffic. Always review and audit your use of such directives regularly.

Troubleshooting and Solving noindex Tag Errors

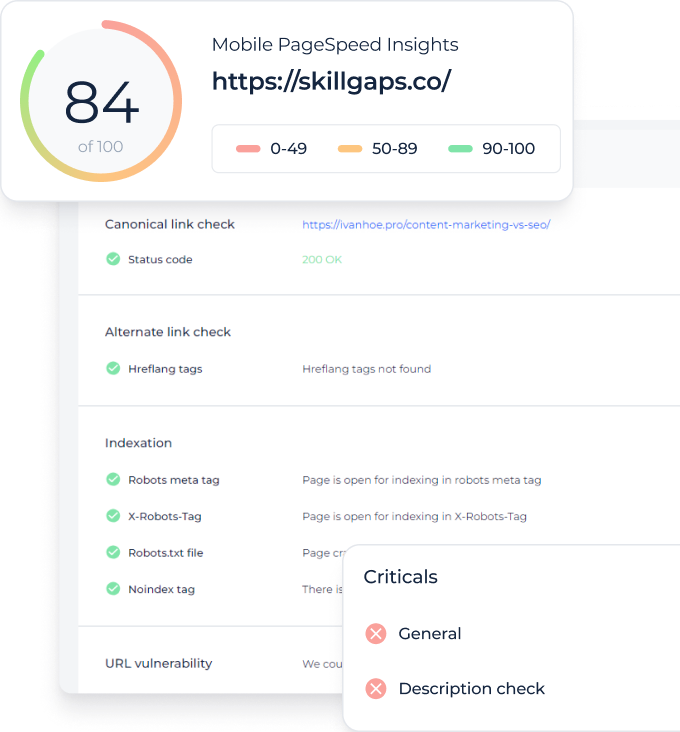

When optimizing websites, it’s essential to be vigilant about potential errors that can hinder site visibility and user experience. The noindex tag, while beneficial in certain scenarios, can sometimes be misapplied or mismanaged, leading to unintended SEO implications. Here are some common issues related to this tag and how to resolve them:

Noindex Tag Applied Accidentally

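Unintentionally applying a noindex tag can cause vital pages to disappear from search results, severely affecting organic traffic and visibility. To recover, remove the tag from the affected pages, then use the URL Inspection tool in Google Search Console to confirm the change and request reindexing.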

Conflicting Directives in Robots.txt

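Having both Disallow and Allow rules that match the same URL in robots.txt makes it hard to predict whether that URL will be crawled. Google resolves such conflicts by applying the most specific (longest) matching rule and, when two rules are equally specific, the least restrictive one. Review the file so that each important URL matches the rule you intend, and remember that a page blocked by Disallow can’t have its noindex tag read.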
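To show how such a conflict is settled, here is a simplified sketch of the precedence rule Google documents: the longest matching path wins, and Allow wins a tie. It assumes plain path prefixes only (no * or $ wildcards), and the rule list is hypothetical. Python’s own urllib.robotparser applies rules in file order instead, so it can disagree with Google on files like this one:

```python
from typing import List, Tuple

# Hypothetical robots.txt rules as (directive, path prefix) pairs.
RULES: List[Tuple[str, str]] = [
    ("disallow", "/shop/"),
    ("allow", "/shop/sale/"),
    ("disallow", "/page"),
    ("allow", "/page"),
]


def is_allowed(path: str, rules: List[Tuple[str, str]]) -> bool:
    """Longest matching prefix wins; on a tie, Allow (least restrictive) wins."""
    best_len = -1
    allowed = True  # no matching rule means the path may be crawled
    for directive, prefix in rules:
        if not prefix or not path.startswith(prefix):
            continue  # an empty rule matches nothing
        is_allow = directive.lower() == "allow"
        if len(prefix) > best_len or (len(prefix) == best_len and is_allow):
            best_len = len(prefix)
            allowed = is_allow
    return allowed


if __name__ == "__main__":
    for path in ("/shop/cart", "/shop/sale/shoes", "/page", "/about"):
        print(path, "allowed" if is_allowed(path, RULES) else "blocked")
```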

Improper Implementation of Noindex

If the noindex tag isn’t correctly implemented, for instance if it is placed outside the <head> section, search engines may not recognize it. Make sure the meta tag sits inside <head> and is present in the HTML that crawlers actually receive.
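One quick way to catch this mistake is to parse the page and record where each robots meta tag appears. This sketch uses Python’s built-in html.parser. It treats the tag as misplaced once a <body> tag has started or </head> has closed, which simplifies how real HTML parsers build the document:

```python
from html.parser import HTMLParser
from typing import List, Tuple


class RobotsMetaFinder(HTMLParser):
    """Records each <meta name="robots"> tag and whether it sat inside <head>."""

    def __init__(self) -> None:
        super().__init__()
        self.in_head = False
        self.found: List[Tuple[str, bool]] = []  # (content, inside_head)

    def handle_starttag(self, tag, attrs):
        # html.parser already lowercases tag and attribute names.
        if tag == "head":
            self.in_head = True
        elif tag == "body":
            self.in_head = False
        elif tag == "meta":
            attributes = {name: (value or "") for name, value in attrs}
            if attributes.get("name", "").lower() == "robots":
                self.found.append((attributes.get("content", ""), self.in_head))

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head = False


def robots_meta_report(html: str) -> List[Tuple[str, bool]]:
    finder = RobotsMetaFinder()
    finder.feed(html)
    finder.close()
    return finder.found


if __name__ == "__main__":
    page = '<html><head><title>Hi</title></head><body><meta name="robots" content="noindex"></body></html>'
    for content, inside_head in robots_meta_report(page):
        print(content, "OK" if inside_head else "outside <head>, may be ignored")
```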

Noindex Tag in HTTP Headers

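Sometimes the noindex directive is sent in an X-Robots-Tag HTTP header without your knowledge, for example through server, CDN, or framework configuration, causing pages to be excluded from search results. Because the header doesn’t appear in the page source, check the response headers directly with your browser’s developer tools or a command such as curl -I.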
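Header-based directives are easy to miss because they never appear in the page source. The sketch below scans response headers for an X-Robots-Tag that blocks indexing, including values aimed at a single crawler, such as "googlebot: noindex". It is simplified in two ways: it only recognizes noindex and none, and the hypothetical fetch_headers helper assumes the server answers HEAD requests:

```python
import urllib.request
from typing import Iterable, Tuple

# Rules whose own syntax contains a colon, so they are not user-agent prefixes.
VALUE_RULES = {"unavailable_after", "max-snippet", "max-image-preview", "max-video-preview"}


def header_blocks_indexing(headers: Iterable[Tuple[str, str]], user_agent: str = "googlebot") -> bool:
    """True if any X-Robots-Tag header tells this crawler not to index the page."""
    for name, value in headers:
        if name.lower() != "x-robots-tag":
            continue
        prefix, sep, rest = value.partition(":")
        prefix = prefix.strip().lower()
        # "googlebot: noindex" targets one crawler; "noindex, max-snippet:20" does not.
        if sep and prefix and prefix not in VALUE_RULES and " " not in prefix and "," not in prefix:
            if prefix != user_agent.lower():
                continue  # rule is aimed at a different crawler
            value = rest
        tokens = {token.strip().lower() for token in value.split(",")}
        if "noindex" in tokens or "none" in tokens:  # "none" = noindex, nofollow
            return True
    return False


def fetch_headers(url: str):
    """Send a HEAD request and return the response headers as (name, value) pairs."""
    request = urllib.request.Request(url, method="HEAD")
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.headers.items()


if __name__ == "__main__":
    print(header_blocks_indexing([("X-Robots-Tag", "googlebot: noindex")]))
```

For a live page, you would pass the result of fetch_headers("https://example.com/") to header_blocks_indexing. Note that urlopen raises an error for 4xx and 5xx responses, so pages that fail to load need separate handling.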

Noindex in Bulk Actions or Plugins

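CMS platforms or SEO plugins might apply noindex tags in bulk, for instance on all archive pages, without you realizing it. Review their indexing settings, including site-wide options such as WordPress’s “Discourage search engines from indexing this site” checkbox.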

Conclusion

The “noindex” tag in SEO tells search engines not to index specific webpages, ensuring they don’t appear in search results. It can be applied for various purposes, such as excluding duplicate or confidential content. The tag can be set using an HTML meta tag or an HTTP header. Other SEO directives, like “nofollow” and “noarchive”, each serve a distinct role. Major search engines like Google generally respect these directives, but they are requests rather than guarantees. Misusing “noindex” can lead to SEO setbacks that hurt website visibility, so regular audits and tools like Google Search Console are crucial for confirming proper implementation and detecting errors.