What Is Pagination?

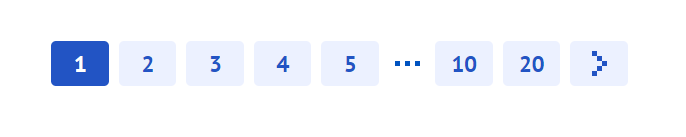

Pagination is an ordinal numbering of pages, which is usually located at the top or bottom of the site pages. In most cases, it is used for main pages and partitions. It often looks like this:

Pagination makes users’ lives simpler and more convenient by distributing products across the website in a measured manner. Imagine an eCommerce website whose catalog consists of several hundred products from different categories, all placed on one single web page. Will that confuse the user? Definitely. So what is pagination in web development in practice?
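As a rough illustration of the idea, the sketch below splits a catalog into pages of a fixed size. The function name `paginate`, the `perPage` default of 20, and the sample catalog are assumptions made for this example, not part of any particular platform.

```javascript
// Minimal sketch: return the items for one page plus basic page metadata.
// `paginate`, `page`, and `perPage` are illustrative names, not a library API.
function paginate(items, page, perPage = 20) {
  const totalPages = Math.max(1, Math.ceil(items.length / perPage));
  // Clamp the requested page into the valid range 1..totalPages.
  const current = Math.min(Math.max(1, page), totalPages);
  const start = (current - 1) * perPage;
  return {
    page: current,
    totalPages,
    items: items.slice(start, start + perPage),
  };
}

// Example: a catalog of 45 products shown 20 per page.
const catalog = Array.from({ length: 45 }, (_, i) => `product-${i + 1}`);
const lastPage = paginate(catalog, 3);
console.log(lastPage.totalPages, lastPage.items.length); // 3 5
```

Clamping the page number means that a request for a page beyond the end simply returns the last page instead of an empty result.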

Let’s look at some of the potential problems that arise when you use pagination, without paying attention to certain issues:

Pagination Meaning for SEO: What Problems Can Appear

Pagination issues often appear on eCommerce websites, blogs, and forums, where content is spread across a great number of pages. The most common problems are related to user experience (UX) and search engine indexation.

Limited search engine visits to your site

When search engines crawl your site, the depth and number of pages they visit at a time vary depending on the search engine, the site’s trust, the content update rate, and so on. If you have a huge number of pagination pages, the likelihood that search engines will go through all of them and index all the end pages (goods/articles) is significantly reduced. In addition, the crawl limit will be spent on visiting pagination pages rather than the really important pages of the site.

But be careful: since Google no longer supports rel=”prev”/”next”, there is a chance you can disrupt important paginated content when pages are indexed.

Problem with duplicates

Depending on the structure of your paginated pages, it is very likely that some pages contain similar or identical content. In addition, you will often find that the same SEO title and meta description tags appear on multiple pages of your site. In this case, duplicate content on the website makes it difficult for search engines to determine the most relevant page for a particular search query.
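One common way to avoid identical titles across a paginated series is to include the page number in them. A minimal sketch, assuming a hypothetical helper `pageTitle` and a suffix format chosen only for illustration:

```javascript
// Hypothetical helper: build a distinct <title> text for each paginated page.
// The "Page N" suffix format is an assumption, not a search engine requirement.
function pageTitle(baseTitle, page) {
  // The first page keeps the plain title; later pages get a page suffix.
  return page <= 1 ? baseTitle : `${baseTitle} - Page ${page}`;
}

console.log(pageTitle("Sneakers Catalog", 1)); // Sneakers Catalog
console.log(pageTitle("Sneakers Catalog", 3)); // Sneakers Catalog - Page 3
```

The same approach can be applied to meta descriptions.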

How Pagination Affects Site Navigation

Pagination plays a pivotal role in shaping the way users navigate a website, influencing their overall experience in several ways:

- Direction and Structure: Pagination offers a clear structure to content-heavy websites. Think of it as chapters in a book or episodes in a series. It lets users know where they are in the content and how much is left, giving them a sense of control and predictability.

- Overwhelming Content: Without pagination, a user could be faced with an endless scroll of content. This can be overwhelming, especially when they’re looking for specific information. Pagination breaks the content into digestible chunks, making it easier for users to process and understand.

- Retention and Engagement: Continuous scrolling can lead to content being quickly skimmed rather than properly read. Pagination prompts users to make small commitments at intervals (clicking to the next page), which can boost retention and engagement.

- Progress Tracking: Pagination allows users to remember where they left off. If a user is on the 5th page of a blog, for instance, they can easily return to where they stopped, which isn’t as straightforward with infinite scrolling.

However, poorly designed pagination can disrupt the user experience. If page numbers are too close together or if there’s no clear way to move to the next chunk of content, users can become frustrated.

Balancing Usability and User Preferences

The challenge with pagination is striking the right balance between usability and catering to user preferences.

- Shorter Load Times vs. Continuous Scrolling: A primary advantage of pagination is that it reduces the initial load time. Instead of loading all content simultaneously, only a portion is loaded, making the site faster. However, some users prefer the smooth experience of infinite scrolling, especially on mobile devices, despite the potentially longer initial load time.

- Click Efficiency: Forcing users to constantly click ‘next’ can be tiresome, especially if the content on each page is minimal. It’s essential to calibrate the amount of content on each page so that users feel they’re making progress with each click and not just moving through a never-ending cascade of pages.

- Remembering User Preferences: Some modern sites remember user preferences when it comes to viewing content. For instance, if a user selects to view 50 items per page, the site retains this preference for future visits, enhancing the overall user experience.

- Clear and Intuitive Design: Regardless of the pagination style, the design must be intuitive. Users should be able to easily identify and navigate to the next page or return to a previous page. Consider clear labels, spacing, and using familiar symbols.
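Remembering the items-per-page preference mentioned above could look roughly like the sketch below. The key name, the allowed sizes, and the in-memory stand-in for `localStorage` are all assumptions made so that the example runs outside a browser.

```javascript
// Sketch: remember the "items per page" choice between visits.
// `storage` is any object with getItem/setItem (for example window.localStorage
// in a browser). The key name and allowed sizes are assumptions for this example.
const PAGE_SIZE_KEY = "itemsPerPage";
const ALLOWED_SIZES = [10, 20, 50];

function savePageSize(storage, size) {
  // Only store values the site actually offers.
  if (ALLOWED_SIZES.includes(size)) {
    storage.setItem(PAGE_SIZE_KEY, String(size));
  }
}

function loadPageSize(storage, fallback = 20) {
  const stored = Number(storage.getItem(PAGE_SIZE_KEY));
  // Ignore missing or unexpected values and fall back to the default.
  return ALLOWED_SIZES.includes(stored) ? stored : fallback;
}

// In-memory stand-in for localStorage so the example runs anywhere.
const memory = new Map();
const fakeStorage = {
  getItem: (key) => (memory.has(key) ? memory.get(key) : null),
  setItem: (key, value) => memory.set(key, value),
};

savePageSize(fakeStorage, 50);
console.log(loadPageSize(fakeStorage)); // 50
```

Validating the stored value against a fixed list keeps a stale or tampered preference from requesting an unreasonable page size.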

In conclusion, while implementing pagination alone can significantly enhance site navigation and user experience, it’s crucial to be attentive to user needs and preferences. Regularly collecting feedback and analyzing user behavior can help refine and optimize the pagination strategy for better user experience.

Differences in Pagination Preferences for Mobile vs. Desktop Users

Pagination is greatly influenced by the device being used. Mobile and desktop devices have distinct differences that impact how users interact with content and navigate websites:

- Screen Size: The most apparent difference is screen size. Desktops offer a more extensive view, allowing users to see more content at once and making pagination more tolerable. On mobile, the smaller screen can make too many pagination controls cluttered and challenging to navigate.

- Interaction Method: Desktop users rely on a mouse and keyboard, making precise selections straightforward. Mobile users, on the other hand, use touch gestures, which require pagination elements to be larger and spaced out to avoid accidental clicks.

- User Expectation: On desktops, users are accustomed to and expect structured content divisions, making them more tolerant of pagination. Mobile users, familiar with social media platforms and apps, have grown accustomed to infinite scrolling, thus often expecting a more seamless browsing experience.

- Content Consumption Speed: Generally, mobile users skim content faster than desktop users. They’re often on-the-go and might not have the patience for extensive pagination.

The Rise of Infinite Scroll in Mobile Interfaces

Infinite scrolling has become synonymous with mobile browsing, primarily due to social media platforms and news apps adopting this design:

- Seamless Experience: Infinite scroll provides a continuous flow of content, making browsing smoother. Users can easily swipe up to access more content without the interruption of clicking a ‘next’ button.

- Engagement Boost: The endless flow of content can lead to users spending more time on a platform. The next piece of content is always immediately accessible, making it easy for users to keep consuming.

- Reduced Load Times: With infinite scroll, content is loaded in chunks. As users scroll, the next batch of content starts loading, ensuring that users don’t have to wait for an entire page of new content to load.

- Challenges: However, infinite scrolling on mobile isn’t without its challenges. Users might find it hard to navigate back to previous content or reach the footer of a website. It can also pose issues for users with limited data plans, as continuous loading can consume more data.

Technical Implementation

1. Implementing ‘noindex, follow’ for Pagination

For this solution, you’ll be adding a meta tag to the <head> section of paginated pages.

Code Example:

<meta name="robots" content="noindex, follow"/>

Pitfalls:

- Forgetting Pages: Ensure you only exclude paginated pages beyond the first one. The main content page should remain indexed.

- Consistency: Ensure that all paginated URLs follow a consistent pattern to avoid missing any during the implementation.
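To reduce the risk of tagging the wrong pages, the decision can live in one place in the page template. A minimal sketch, where `robotsMetaFor` is a hypothetical helper rather than a function of any CMS:

```javascript
// Sketch: decide which robots meta tag (if any) a paginated page should get.
// `robotsMetaFor` is a hypothetical helper, not part of any CMS.
function robotsMetaFor(page) {
  // Page 1 stays indexable, so it gets no tag; later pages get noindex, follow.
  return page > 1 ? '<meta name="robots" content="noindex, follow"/>' : "";
}

console.log(robotsMetaFor(1) === ""); // true
console.log(robotsMetaFor(2)); // <meta name="robots" content="noindex, follow"/>
```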

2. Implementing ‘View all’ with rel=’canonical’

You’ll be directing search engines to treat the paginated pages as part of the ‘View all’ page.

Code Example:

<link rel="canonical" href="http://example.com/catalog/view-all.html"/>

Pitfalls:

- Slow Loading ‘View all’ Pages: If the ‘View all’ page is large, it might be slow to load, which can harm user experience and SEO. Monitor page performance after implementation.

- Missing Canonical Tags: Ensure every paginated page has the correct canonical tag pointing to the ‘View all’ page.
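A simple check for the missing-tag pitfall could look like the sketch below. The helper `hasViewAllCanonical` is hypothetical, and its regular expression only recognizes the exact attribute order used in the example above, so treat it as an illustration rather than a robust HTML parser.

```javascript
// Sketch: check that a page's HTML contains a canonical tag pointing at the
// "View all" page. Simplification: assumes rel comes before href, as in the
// example tag above. A real audit should use a proper HTML parser.
function hasViewAllCanonical(html, viewAllUrl) {
  const match = html.match(/<link\s+rel="canonical"\s+href="([^"]*)"/i);
  return match !== null && match[1] === viewAllUrl;
}

const viewAll = "http://example.com/catalog/view-all.html";
const goodPage = `<head><link rel="canonical" href="${viewAll}"/></head>`;
const badPage = "<head><title>Catalog, page 2</title></head>";

console.log(hasViewAllCanonical(goodPage, viewAll)); // true
console.log(hasViewAllCanonical(badPage, viewAll)); // false
```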

3. Implementing rel=’prev’/’next’

This helps search engines understand the relationship between paginated pages.

Code Example:

For the first page:

<link rel="next" href="http://example.com/page2.html">

For the second page:

<link rel="prev" href="http://example.com/page1.html">

<link rel="next" href="http://example.com/page3.html">

Pitfalls:

- Broken Chain: Ensure every page correctly points to the previous and next pages. A single mistake can break the chain, causing search engines to misinterpret the relationship.

- Changing URL Patterns: If URLs change (due to a website update or other reason), ensure the rel=’prev’/’next’ tags are updated accordingly.
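Generating the tags from the page number, instead of writing them by hand, is one way to avoid a broken chain. A minimal sketch, where `prevNextLinks` and the caller-supplied `urlFor` function are names invented for this example:

```javascript
// Sketch: build rel="prev"/"next" link tags for one page of a series.
// `urlFor` is a caller-supplied function mapping a page number to its URL.
function prevNextLinks(page, totalPages, urlFor) {
  const tags = [];
  // Every page except the first points back to its predecessor.
  if (page > 1) {
    tags.push(`<link rel="prev" href="${urlFor(page - 1)}">`);
  }
  // Every page except the last points forward to its successor.
  if (page < totalPages) {
    tags.push(`<link rel="next" href="${urlFor(page + 1)}">`);
  }
  return tags;
}

const urlFor = (n) => `http://example.com/page${n}.html`;
console.log(prevNextLinks(1, 4, urlFor)); // only a rel="next" tag
console.log(prevNextLinks(4, 4, urlFor)); // only a rel="prev" tag
```

Because the URL pattern is defined once in `urlFor`, a change of URL scheme only needs to be made in one place.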

4. Implementing AJAX and JavaScript Scrolling

In this method, as users scroll or click a “Show More” button, more content is loaded dynamically.

Code Example:

Using jQuery:

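var loading = false; // guard so that only one request runs at a time
$(window).scroll(function() {
  // Trigger when the user is within 100px of the bottom of the page.
  // A strict equality check can be missed because of fractional scroll positions.
  var nearBottom = $(window).scrollTop() + $(window).height() >= $(document).height() - 100;
  if (nearBottom && !loading) {
    loading = true;
    // Load more content here
    $.ajax({
      url: "nextPageUrl", // placeholder for the URL of the next chunk of content
      type: 'GET',
      success: function(data) {
        // Append the data to your content container
        $("#contentContainer").append(data);
      },
      complete: function() {
        // Allow the next request once this one has finished
        loading = false;
      }
    });
  }
});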

Pitfalls:

- Overloading the Browser: Continuously adding content to a page without limits can cause performance issues.

- Navigation Issues: Users might find it hard to navigate to specific sections of the page.

- SEO Concerns: Ensure that the dynamically loaded content is accessible to search engines. This often requires additional SEO considerations, like generating a sitemap with all the accessible URLs.
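For the sitemap mentioned in the last pitfall, a generator can be sketched as follows. The function names and the example URLs are assumptions; the `urlset`, `url`, and `loc` elements and the namespace come from the sitemaps.org protocol.

```javascript
// Sketch: build a minimal XML sitemap from a list of URLs.
// `buildSitemap` and `escapeXml` are illustrative helper names.
function escapeXml(text) {
  // Escape the characters that are not allowed raw inside XML text.
  return text.replace(/&/g, "&amp;").replace(/</g, "&lt;").replace(/>/g, "&gt;");
}

function buildSitemap(urls) {
  const entries = urls.map((url) => `  <url><loc>${escapeXml(url)}</loc></url>`);
  return [
    '<?xml version="1.0" encoding="UTF-8"?>',
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">',
    ...entries,
    "</urlset>",
  ].join("\n");
}

// Example URLs are placeholders for the pages that are loaded dynamically.
console.log(buildSitemap([
  "http://example.com/product-1.html",
  "http://example.com/catalog?page=2&sort=price",
]));
```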

Impact on Site Speed and Performance

1. Impact of Different Pagination Techniques

- Standard Pagination: This is the typical “previous” and “next” style of pagination where content is split across numerous pages.

  - Impact: Generally positive, as loading smaller chunks of content reduces initial load time. However, users may experience loading times every time they click to a new page.

- ‘View All’ Page: A single page containing all content.

  - Impact: This can significantly increase initial loading times as a lot of content must be loaded at once. On the flip side, once the content is loaded, users won’t experience additional waits as they navigate through it.

- Infinite Scrolling: As users scroll down, more content gets loaded automatically.

  - Impact: Initial loading times are usually fast since only a portion of the content is loaded. However, as users scroll, additional content can cause lag if not implemented properly, especially on older devices or slow networks.

- AJAX/JavaScript Pagination: Content is loaded dynamically based on user actions.

  - Impact: Similar to infinite scrolling, initial loads are swift, but the continual addition of content can affect performance over time. AJAX calls must be optimized to avoid lags.

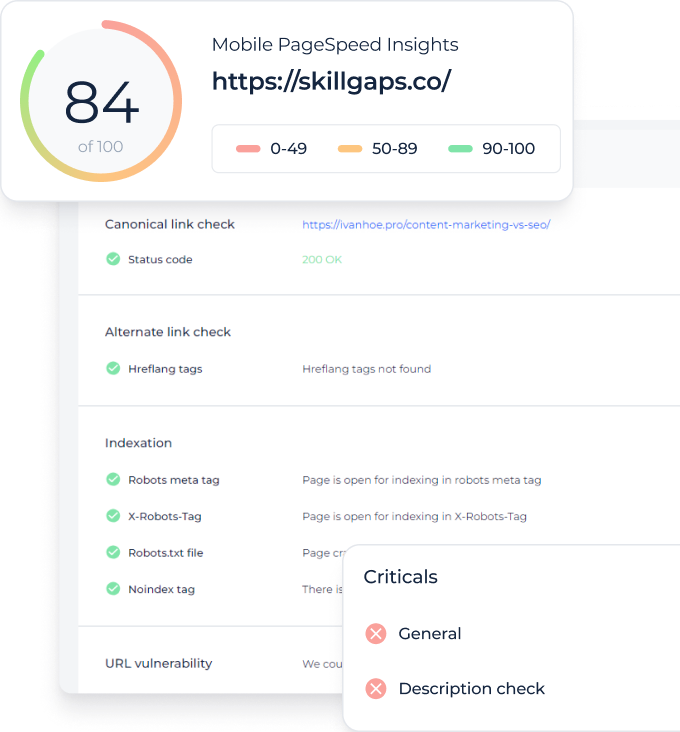

2. Tools to Measure Impact on Site Speed and Performance

- Google PageSpeed Insights: A free tool from Google that provides information about your site’s loading times and offers optimization recommendations.

- WebPageTest: Offers detailed insights into site performance, allowing for testing from multiple locations and different devices.

- GTmetrix: Gives comprehensive insights into page speed and performance, highlighting areas for improvement.

- Pingdom Website Speed Test: Analyzes the load speed of your site and helps identify bottlenecks.

- Browser Developer Tools: Modern browsers have built-in tools to analyze site performance, network requests, and page rendering speeds. The ‘Network’ and ‘Performance’ tabs in Chrome DevTools are especially helpful.

3. Best Practices to Mitigate Impact

- Optimize Content: Compress images, use web-friendly formats, and minify JavaScript and CSS files.

- Limit the Amount of Content Loaded: For infinite scroll or AJAX methods, ensure you’re not loading too much content at once. Load in manageable chunks.

- Lazy Loading: Use lazy loading for images and other media so that they only load when they’re about to come into the viewport.

- Optimize Server Response Times: Use CDNs, optimize server settings, and leverage caching mechanisms.

- Monitor & Iterate: Regularly monitor your site’s performance and make iterative changes based on real-world data.

Solution 1. Deleting Page Pagination From the Index with the Help of Noindex

In most cases, this is the preferred method, and it can be implemented quickly. The idea is to exclude all pagination pages from the index except the first.

It is implemented in the following way:

<meta name="robots" content="noindex, follow"/>

The meta tag is added to the HEAD section on all pages but the first. Thus, we exclude all pagination pages from the index except the main page of the catalog, and at the same time we ensure the indexing of all products/pages that belong to this catalog. Pay attention to the following nuances:

- If the main catalog page has a description text, it is desirable to place it only on the first page.

- You should check that the first page URL is not duplicated. For example, when the pagination is implemented like this:

site.com/catalog?page=2

site.com/catalog?page=3

… site.com/catalog?page=N

the link to the first page from any other page should point to

site.com/catalog

and 301 redirects have to be configured from

site.com/catalog?page=1

to site.com/catalog.
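The redirect rule for the duplicated first page can be sketched as a small function. The name `firstPageRedirect` and the `https://` scheme are assumptions added for this example; the `page` parameter matches the URLs above.

```javascript
// Sketch: find the redirect target for a duplicated first-page URL.
// Returns the URL to 301-redirect to, or null when no redirect is needed.
function firstPageRedirect(rawUrl) {
  const url = new URL(rawUrl);
  // Only ?page=1 duplicates the plain catalog URL.
  if (url.searchParams.get("page") !== "1") {
    return null;
  }
  url.searchParams.delete("page");
  return url.toString();
}

console.log(firstPageRedirect("https://site.com/catalog?page=1")); // https://site.com/catalog
console.log(firstPageRedirect("https://site.com/catalog?page=2")); // null
```

In practice the same rule is usually configured in the web server or the CMS router, and the function above only illustrates the logic.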

Pros

- suitable for Yandex;

- least difficult of all solutions;

- a great way to exclude all pages of pagination from the index, if there is no logical reason for including them in the index.

Cons

- although it solves the potential problem of pagination, at the same time we exclude paginated content from the index;

- if there are many products and you do not use an XML sitemap, the products located deep in the catalog will take a long time to be indexed.

Solution 2. “View all” and rel=”canonical”

This method, which Google has recommended, requires creating a separate “View all” page where all products/pages from the catalog are displayed. On every pagination page we then put rel=”canonical” pointing to the “View all” page.

Implementation of this method: after you have created the “View all” page (for example, site.com/catalog/view-all.html), place the following into the HEAD section of all pagination pages:

<link rel="canonical" href="http://site.com/catalog/view-all.html"/>

Thus, we show the search engines that each pagination page is, so to speak, a part of the “View all” page. Google claims that:

- this is their preferred method;

- users tend to view the entire category on one page at once (although this point is rather controversial and depends on the situation).

Nuances

The “View All” page should load rather quickly, preferably within 1-3 seconds. Therefore, this method is ideally suited for a category that has a number of pages with pagination from 5 to 20 and is not suitable for directories with hundreds of pages of pagination.

Pros

- priority method for Google;

- all the contents of the pagination will be in the search index through the “View all” page.

Cons

- not suitable if there are many pages or many quality pictures for products/articles;

- a rather complex implementation on most standard CMSs.

Solution 3. Rel=”prev”/”next”

Update: According to the Google Webmaster Central Blog, rel=”prev” and rel=”next” are no longer an indexing signal.

This option can be the most confusing, but it is perhaps the most universal method for Google (Yandex does not take these attributes into account). Since the implementation is rather complicated, you should be very careful when applying this method. Let’s see how it works.

For example, you have 4 pages in the catalog. Using rel=”prev”/”next”, you essentially create a chain between all the pages in this catalog. The chain starts from the first page, where you add the following to the HEAD section:

<link rel="next" href="http://site.com/page2.html">

For the first page, this is the only attribute. For the second page, you must specify both the previous and the next page:

<link rel="prev" href="http://site.com/page1.html">

<link rel="next" href="http://site.com/page3.html">

For the third page, we do the same as for the second one:

<link rel="prev" href="http://site.com/page2.html">

<link rel="next" href="http://site.com/page4.html">

When we are on the last page (in this case, the fourth), we should specify only the previous page in the chain:

<link rel="prev" href="http://site.com/page3.html">

Using these rel=”prev”/”next” attributes, Google merges the page data into a single element in the index. Typically, users will be shown the first page, since it is usually the most relevant one.

Nuances

- for Google, rel=”prev” and rel=”next” are hints, not directives;

- both relative and absolute URLs can be used as values (in accordance with the valid values of the <link> tag);

- if you specify a <base> link in the document, relative paths will be resolved against the base URL;

- if Google detects errors in your markup (for example, if the expected value of the rel=”prev” or rel=”next” attribute is missing), further page indexing and content recognition will be performed based on Google’s heuristic algorithm;

- it should be checked that the first page URL is not duplicated.

Pros

- this method allows you to solve the problem of pagination without using “View all”;

- implementation occurs only with minor changes in HTML.

Cons

- these attributes are not taken into account by Yandex;

- implementation can be quite complex;

- inserting links in the chain of pages should be done very carefully.

But if Google no longer uses rel=”next” and rel=”prev” for indexing paginated pages, how does it handle pagination now?

During the Google Webmaster Office-hours Hangout held on 22 March 2019, John Mueller said that paginated pages are treated like any other normal pages on your website in Google’s index.

Solution 4. AJAX and Javascript Scrolling

You’ve probably come across endless scrolling of goods on e-commerce sites, where products are continuously loaded as you scroll to the bottom of the screen. Although this is an excellent opportunity to improve usability, this method has to be used correctly. It is desirable that the products are not loaded automatically when scrolling. Instead, add a “Show more items” button under the latest products. You can see a good implementation of this method on wikimart.ru, in the lowest-level categories of the catalog.
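The logic behind such a “Show more items” button can be sketched without any DOM code. In the sketch below, `createShowMore` and `fetchPage` are hypothetical names, and `fetchPage` is kept synchronous for brevity, whereas a real implementation would load each batch with an asynchronous AJAX request.

```javascript
// Sketch of "Show more items" logic, kept free of DOM code so it runs anywhere.
// `fetchPage` is a caller-supplied function returning the items of one page;
// in a browser it would wrap an AJAX request. All names are illustrative.
function createShowMore(fetchPage, totalPages) {
  let page = 1; // the first page is assumed to be rendered by the server
  return {
    // Tells the UI whether the button should still be visible.
    hasMore: () => page < totalPages,
    // Called from the button's click handler: returns the next batch,
    // or an empty array once every page has been shown.
    showMore() {
      if (page >= totalPages) return [];
      page += 1;
      return fetchPage(page);
    },
  };
}

const loader = createShowMore((n) => [`item-${n}a`, `item-${n}b`], 3);
console.log(loader.showMore()); // [ 'item-2a', 'item-2b' ]
console.log(loader.showMore()); // [ 'item-3a', 'item-3b' ]
console.log(loader.hasMore()); // false
```

Loading only on an explicit click keeps the footer reachable and gives the user control over how much content is fetched.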

Proper use of parameters

When you use the rel=”prev”/”next” attributes, pages with pagination can contain parameters that do not change the content:

- session variables;

- sorting;

- changing the number of items per page.

In this case, we get duplicated content. To solve the problem, you can combine rel=”prev”/”next” with rel=”canonical”. First, make sure that all pagination pages in a rel=”prev”/”next” chain use the same parameter. Second, for each URL with such a parameter, specify the canonical page without this parameter.
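Deriving the canonical URL by stripping such parameters can be sketched as follows. The parameter names in `IGNORED_PARAMS` and the `https://` scheme are assumptions for this example; substitute the names your site actually uses.

```javascript
// Sketch: strip parameters that do not change the content to get the canonical URL.
// The parameter names below are assumptions; use the ones your site really has.
const IGNORED_PARAMS = ["sessionid", "sort", "per_page"];

function canonicalUrl(rawUrl) {
  const url = new URL(rawUrl);
  for (const name of IGNORED_PARAMS) {
    url.searchParams.delete(name);
  }
  // The page parameter is kept, so each page in the chain stays distinct.
  return url.toString();
}

console.log(canonicalUrl("https://site.com/catalog?page=2&sessionid=abc123"));
// https://site.com/catalog?page=2
```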

Proper use of filters and rel=”prev”/”next”

Now, let’s look at an example where the parameters produce unique content, and it’s important for us to keep such filtered pages in the index. For example, we have a category with sneakers, and we want to create landing pages for searches for different brands, using parameters in the URL.

In this case:

- you do not need to use rel=”canonical” pointing to the main category, since the content is unique;

- create a separate rel=”prev”/”next” chain for each brand;

- write a unique and relevant title.

Alternative Pagination Methods

As websites and applications evolve, developers and designers are continually experimenting with innovative pagination techniques to enhance user experience. Here are some lesser-known but intriguing methods, along with their advantages and disadvantages:

1. Content-Aware Pagination

Description: This technique involves splitting content based on its context rather than a fixed number of items or data size. For instance, an article might be split based on subheadings rather than an arbitrary word count.

Pros:

- Provides a more coherent and logical reading experience.

- Reduces the chance of breaking up important information between pages.

Cons:

- More complex to implement because it requires understanding the content.

- Could lead to uneven page lengths.
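A very simple form of content-aware splitting can be sketched as below. It assumes that subheadings are lines starting with `## `, a markdown-style convention chosen only for this example, and `splitBySubheadings` is a hypothetical helper name.

```javascript
// Sketch: split an article into pages at subheadings instead of a word count.
// Assumes subheadings are lines starting with "## " (a markdown-style convention
// chosen for this example only).
function splitBySubheadings(text) {
  const pages = [];
  let current = [];
  for (const line of text.split("\n")) {
    // A subheading starts a new page, unless nothing has been collected yet.
    if (line.startsWith("## ") && current.length > 0) {
      pages.push(current.join("\n"));
      current = [];
    }
    current.push(line);
  }
  // Flush the lines collected after the last subheading.
  if (current.length > 0) pages.push(current.join("\n"));
  return pages;
}

const article = "Intro text\n## Setup\nStep one\n## Usage\nStep two";
console.log(splitBySubheadings(article).length); // 3
```

As the cons above note, the resulting pages can differ a lot in length, since each one is as long as its section.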

2. Non-linear Pagination

Description: Instead of offering the traditional sequence (1, 2, 3, …), non-linear pagination might jump based on user behavior or content categories. For example, a tutorial website might offer pagination based on difficulty levels.

Pros:

- Tailored to the user’s journey or content categories.

- Can provide a more customized and relevant experience.

Cons:

- Can be confusing for users expecting a standard linear progression.

- Requires more intricate user behavior analysis.

3. Gesture-Based Pagination

Description: Primarily found in mobile apps or e-readers, this method uses swipes, pinches, or other gestures to navigate content.

Pros:

- Provides a smooth and natural navigation experience on touch devices.

- Eliminates the need for visible pagination controls

Cons:

- Might not be intuitive for all users.

- Relies heavily on the responsiveness and sensitivity of the touch interface.

4. Adaptive Pagination

Description: This method adjusts the amount of content displayed based on the user’s behavior. If a user tends to scroll through content rapidly, the system might load more content per page, and vice-versa.

Pros:

- Personalizes the browsing experience to individual user habits.

- Can enhance content consumption for users by providing the “right” amount.

Cons:

- Requires tracking and analyzing user behavior in real-time

- Might misjudge user intent at times

5. Content-Driven Visual Pagination

Description: Instead of standard numbered buttons or links, this method uses visual cues related to the content. For example, a fashion e-commerce site might use color swatches or product images as pagination elements.

Pros:

- Provides a visually engaging and intuitive experience.

- Can offer users insights into content before they navigate to the next page.

Cons:

- Requires higher-quality visual assets and might be resource-intensive.

- Could be seen as gimmicky or cluttered if not done elegantly.

While these alternative pagination methods aren’t as prevalent as standard techniques, they offer unique ways to enhance user experience and engagement. When considering one of these methods, always prioritize usability testing to ensure it aligns with the expectations and preferences of your target audience.

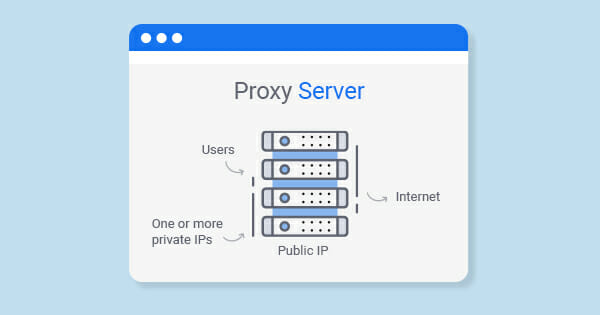

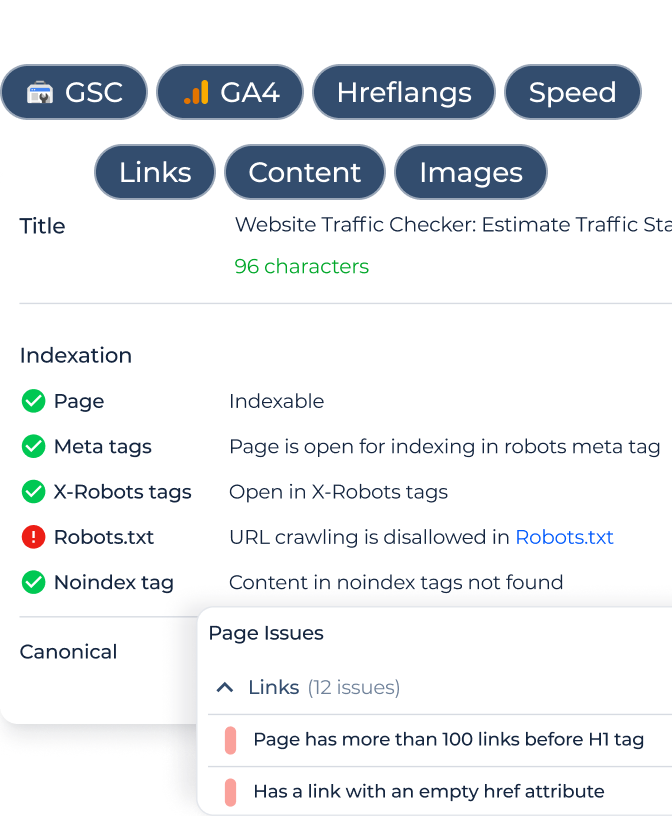

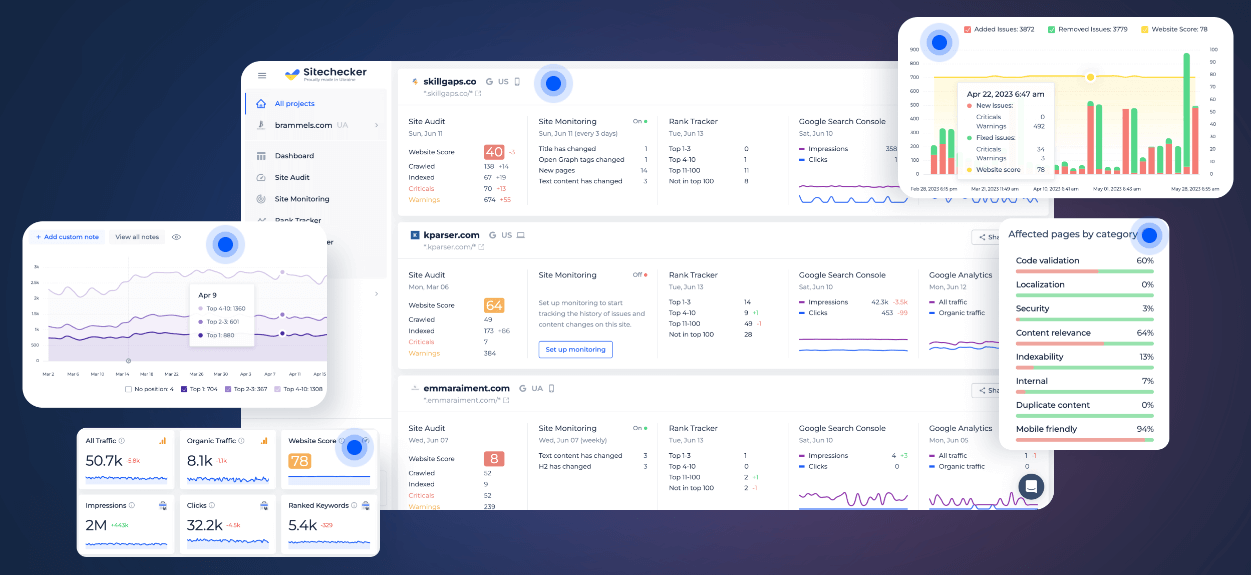

Monitoring Technical Health of Your Website with our SEO Audit Tool

The Site Audit tool by Sitechecker provides a detailed analysis of websites, evaluating various elements to identify strengths and improvement areas. Ideal for SEO professionals and website owners, it offers clear, actionable insights to enhance site visibility and functionality.

Beyond its foundational audit capabilities, the tool boasts a range of advanced features. Users benefit from its user-friendly interface that highlights issues with prioritized recommendations. Additionally, the tool breaks down complex technical jargon, ensuring that even beginners can understand and address identified issues. With consistent use, you’re not only informed about your site’s health but also empowered to make strategic enhancements.

Conclusion

To conclude, here are our recommendations for solving the problem with pagination:

- if you have the technical ability to create a “View all” page (such pages must load quickly and not be very large, so check the webpage size before and after implementing it), then you can use this option, since Google recommends it and Yandex understands the rel=”canonical” directive;

- but probably in most cases the best option is to combine the rel=”next”/”prev” attributes (which Google understands) with the robots “noindex, follow” meta tag (which both Google and Yandex understand).

You can also get acquainted with a talk by Adam Gent at the BrightonSEO conference.